About the Authors

- Kevin He is a Principal at MVP Ventures, where he focuses on infrastructure software and cybersecurity investments from Seed through Series B. He previously helped build the cybersecurity investing practice at WestCap and worked as a Product Manager at Horizon3.ai. He is currently pursuing his MBA at Stanford GSB and holds dual degrees in Business and Data Science from UC Berkeley.

- Lauren Place is an MBA candidate at Stanford Graduate School of Business. She previously launched and scaled zero-to-one products at Wiz, from $100M to over $700M ARR, and at Snyk, from $100M to over $300M. Lauren holds a B.S. from Northwestern University.

- Shachar Ram is an MBA candidate at Stanford Graduate School of Business, where she is conducting research on cybersecurity and identity security as part of her studies. She previously served in Israeli intelligence in cybersecurity and later worked at Cyera, where she helped grow the company from a $500M to a $6B valuation. She holds a degree in Computer Science.

Executive Summary

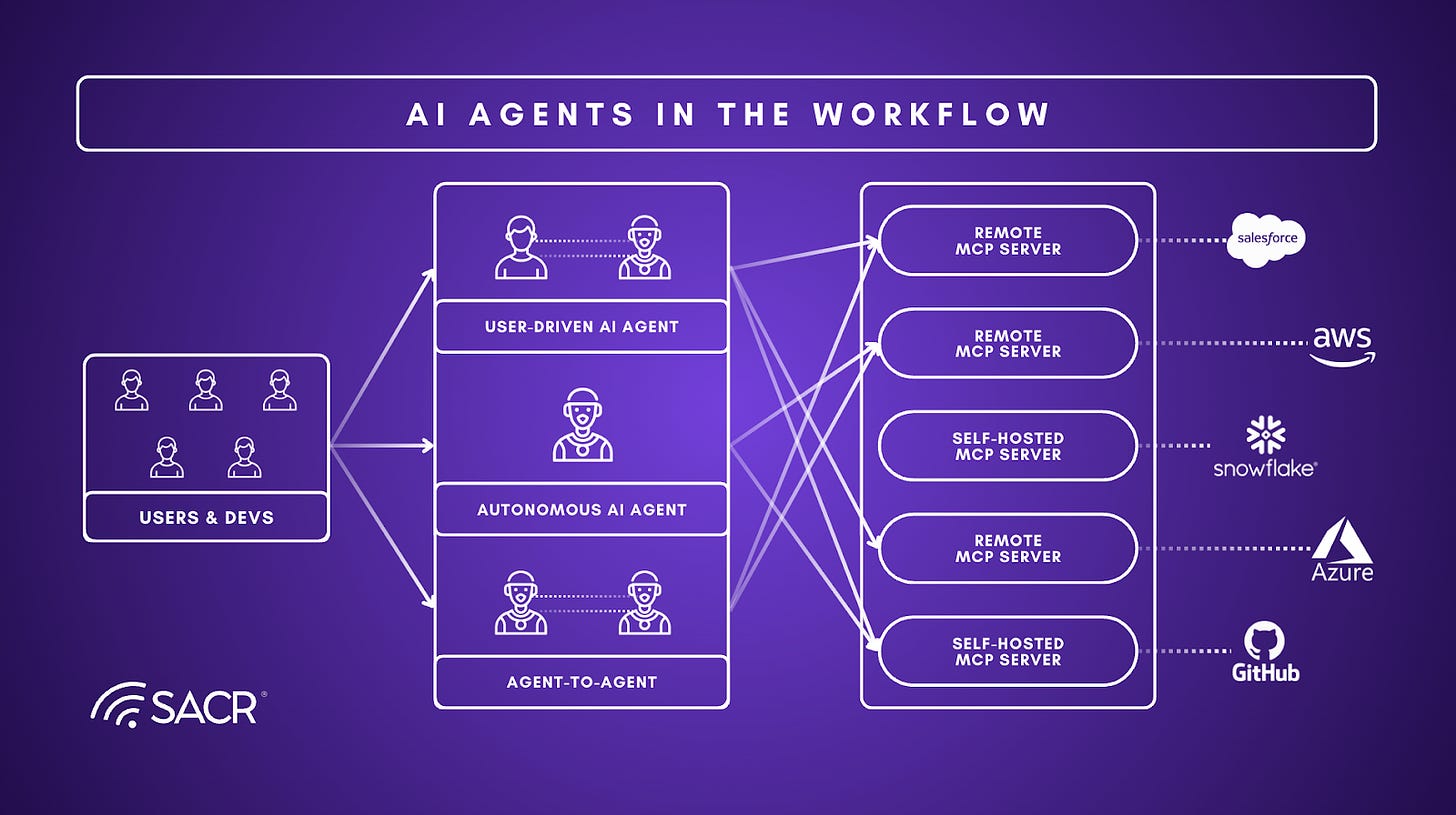

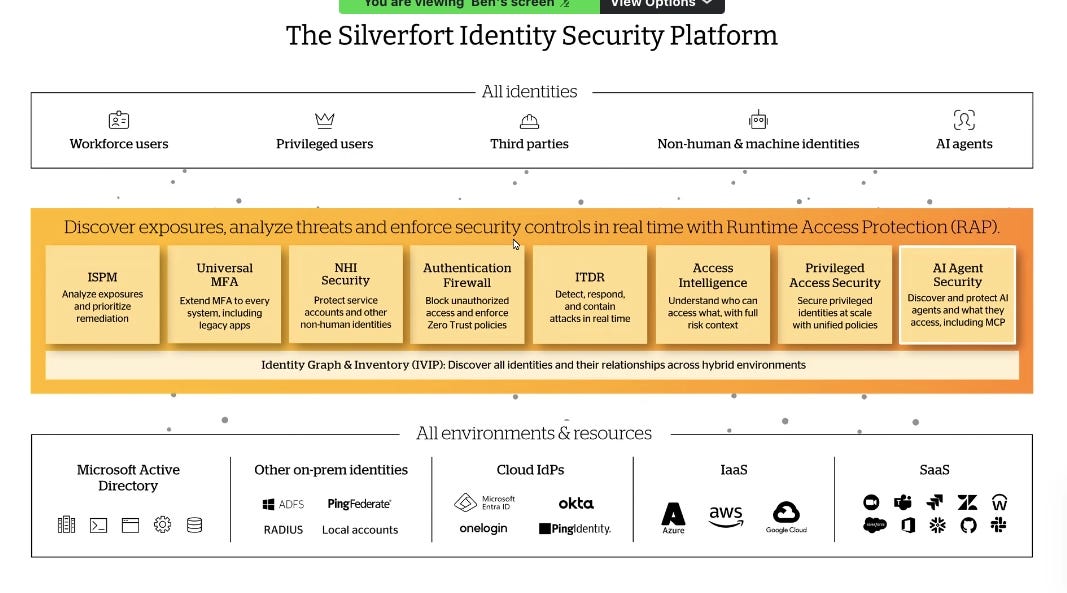

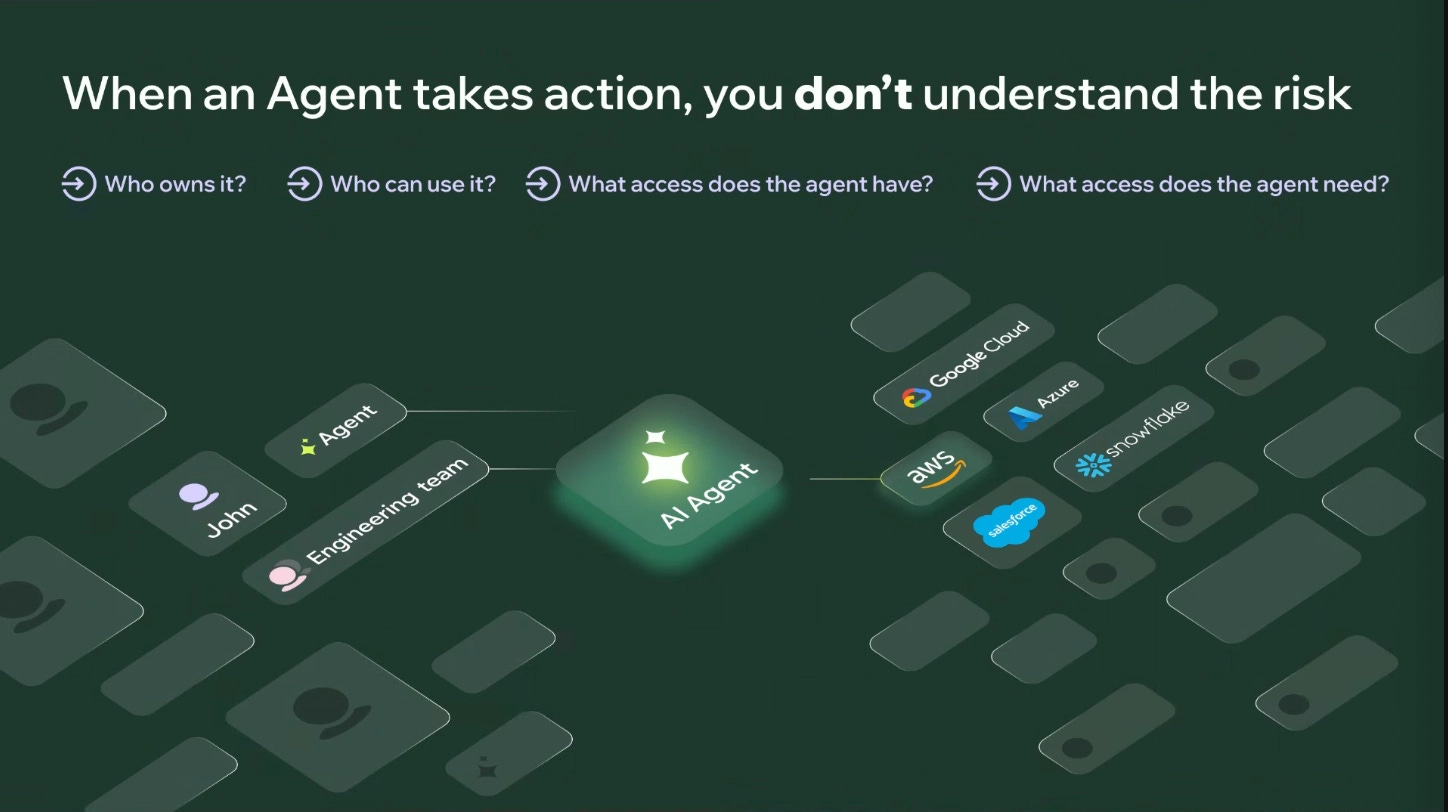

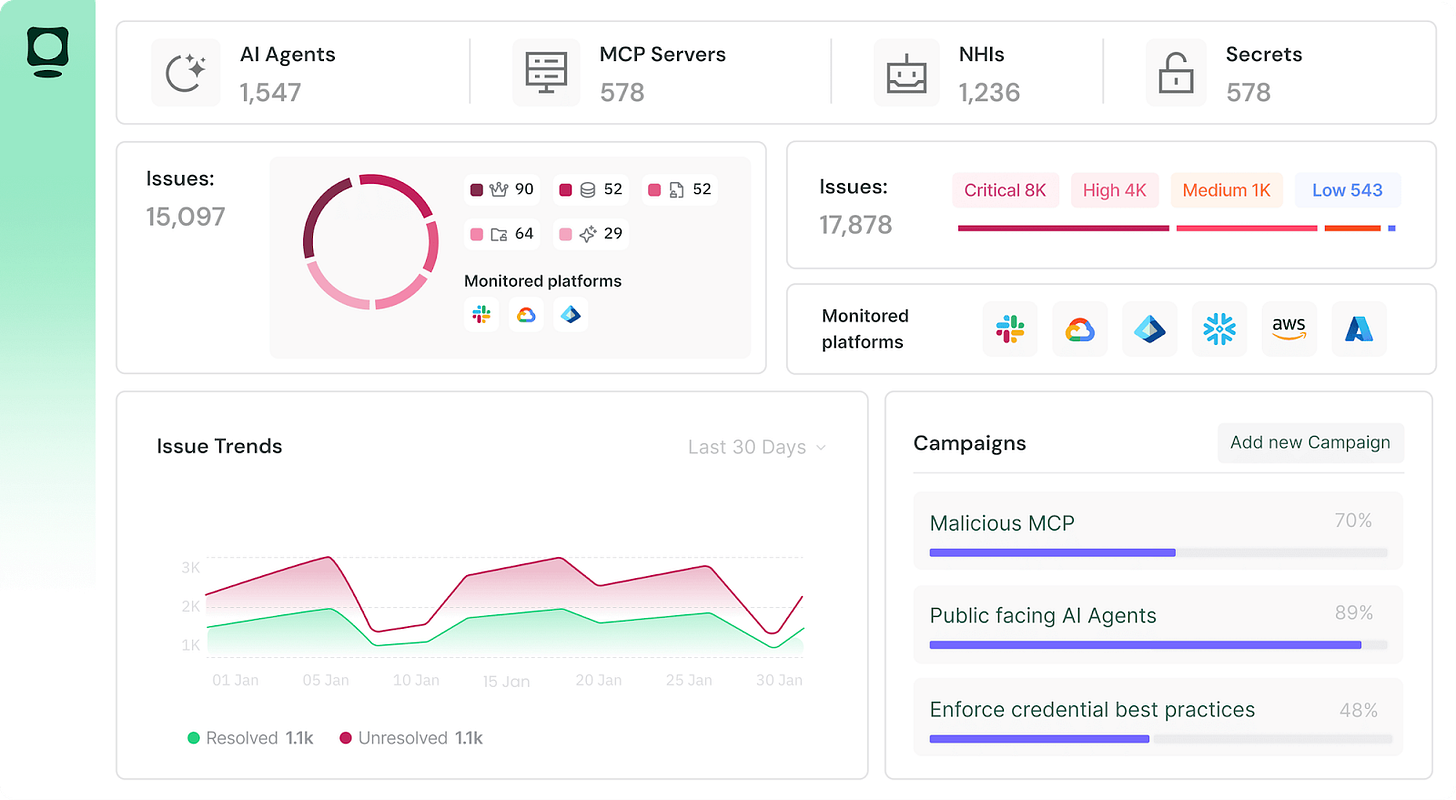

AI agents have moved from experimentation to production deployment across the Fortune 500. With over 3 million agents operating globally and organizations creating thousands per week, the security challenge has shifted from whether to deploy agents to how to secure them at runtime: the moment an agent decides to act, calls a tool, and touches enterprise data.

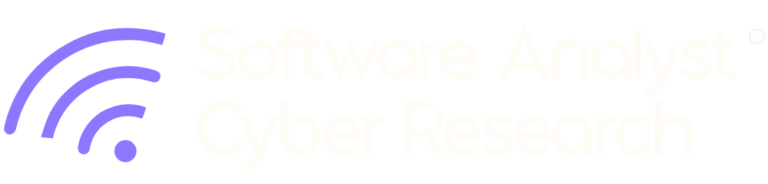

This report builds on SACR’s earlier Emerging Agentic Identity & Access Platforms (AIAP) report, which mapped the agent identity landscape according to the four-phase security framework of discovery, authorization, credential brokering, and runtime enforcement. This follow-up paper focuses specifically on runtime enforcement, examining governance capabilities for AI agents in production.

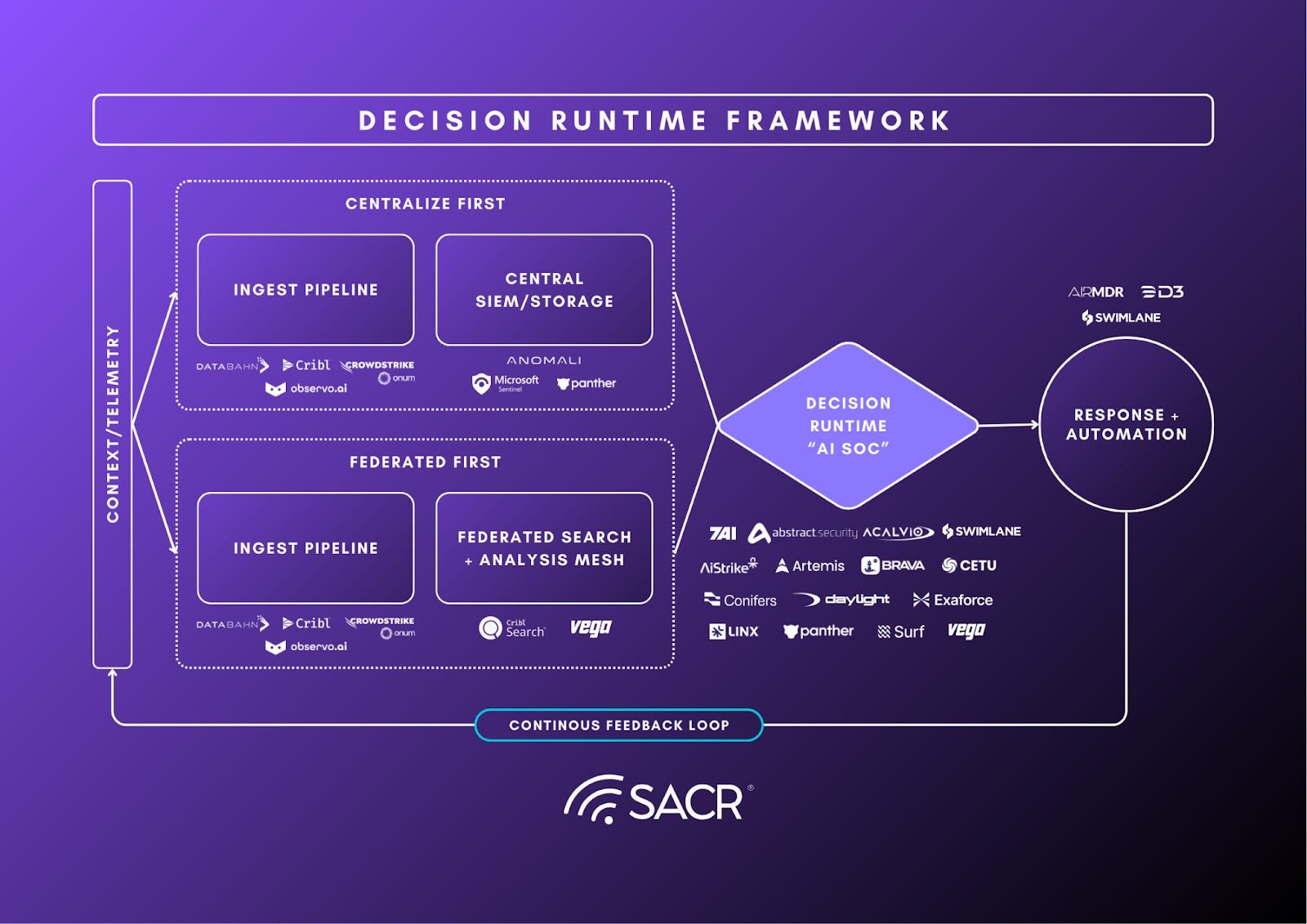

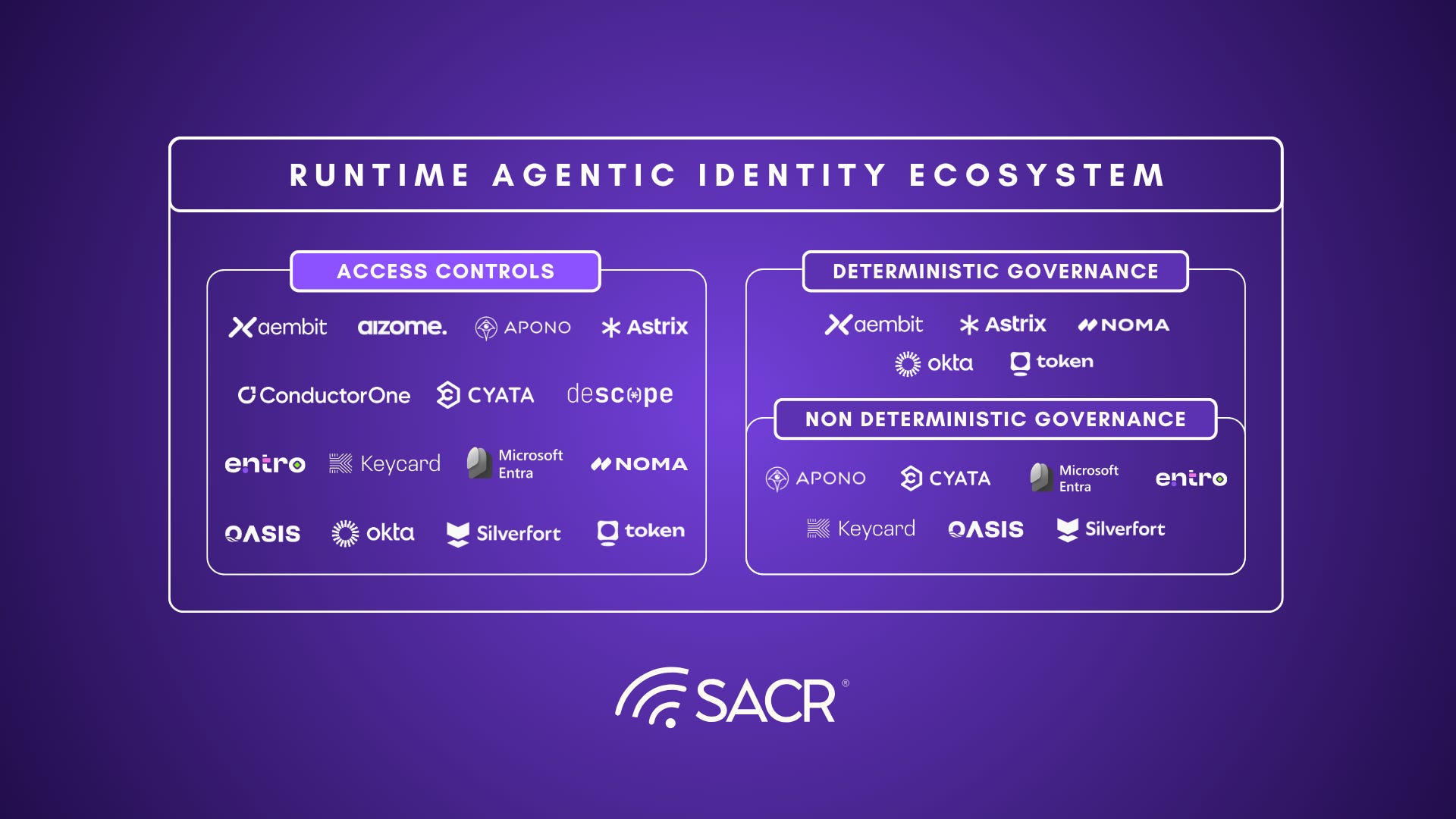

We have identified a three-layer model that reflects how the most advanced identity security platforms are thinking about securing agents at runtime:

Beyond agent discovery, the first layer of securing agentic identities is deterministic governance: a policy engine that defines what an agent can and cannot access. This layer is table stakes, and every vendor in this report has built it. But deterministic governance alone is insufficient. Agents fundamentally display non-deterministic behavior, and an agent can stay within its permitted access boundaries while still doing something unexpected, harmful, or misaligned with its original intent.

The second layer is non-deterministic behavioral analysis, or visibility into agents’ actions and intent. This means understanding not just what resources an agent accessed, but why, and whether that behavior represents a meaningful deviation from the norm. This includes both behavioral tracking and anomaly detection, which surfaces unusual patterns in agent activity over time, and continuous observability, which answers the present-tense question of what an agent is doing right now and whether it should be allowed to continue. This layer provides the visibility and real-time data that make non-deterministic governance possible. Signals such as a continuously updated risk score reflecting the agent’s current behavior, intent drift, and access patterns are the raw material that non-deterministic governance consumes to make decisions.

The third layer is non-deterministic governance, or using those real-time signals to make dynamic policy decisions. This means evaluating agent intent at the moment of an access request, acting on live risk scores that shift as agent behavior evolves, and triggering escalation or human review not on a fixed schedule but in direct response to what the agent is actually doing. Within this layer we identified intent-based authorization and dynamic control and escalation as the two key dimensions.

Note: choosing not to implement this third layer is not necessarily a gap. It can be a deliberate architectural decision. Non-deterministic governance introduces its own risks, including false positives that block legitimate agent actions and erode trust in the system, and false negatives that create a false sense of security. Some vendors have made a conscious choice to keep their governance layer simple, deterministic, and predictable, accepting that limitation in exchange for a system that behaves reliably and does not introduce new failure modes. This is a reasonable position, particularly for organizations in early stages of agent deployment.

A dedicated case study examines MCP (Model Context Protocol) as a proving ground for these challenges. MCP’s adoption has outpaced its security maturity: 53% of public MCP servers use static secrets, only 8.5% implement OAuth, and tool poisoning attacks succeed at a 72.8% rate in benchmark testing. Four risk layers (supply chain integrity, communication security, authorization granularity, and credential management) make MCP the most visible attack surface in the agentic identity stack.

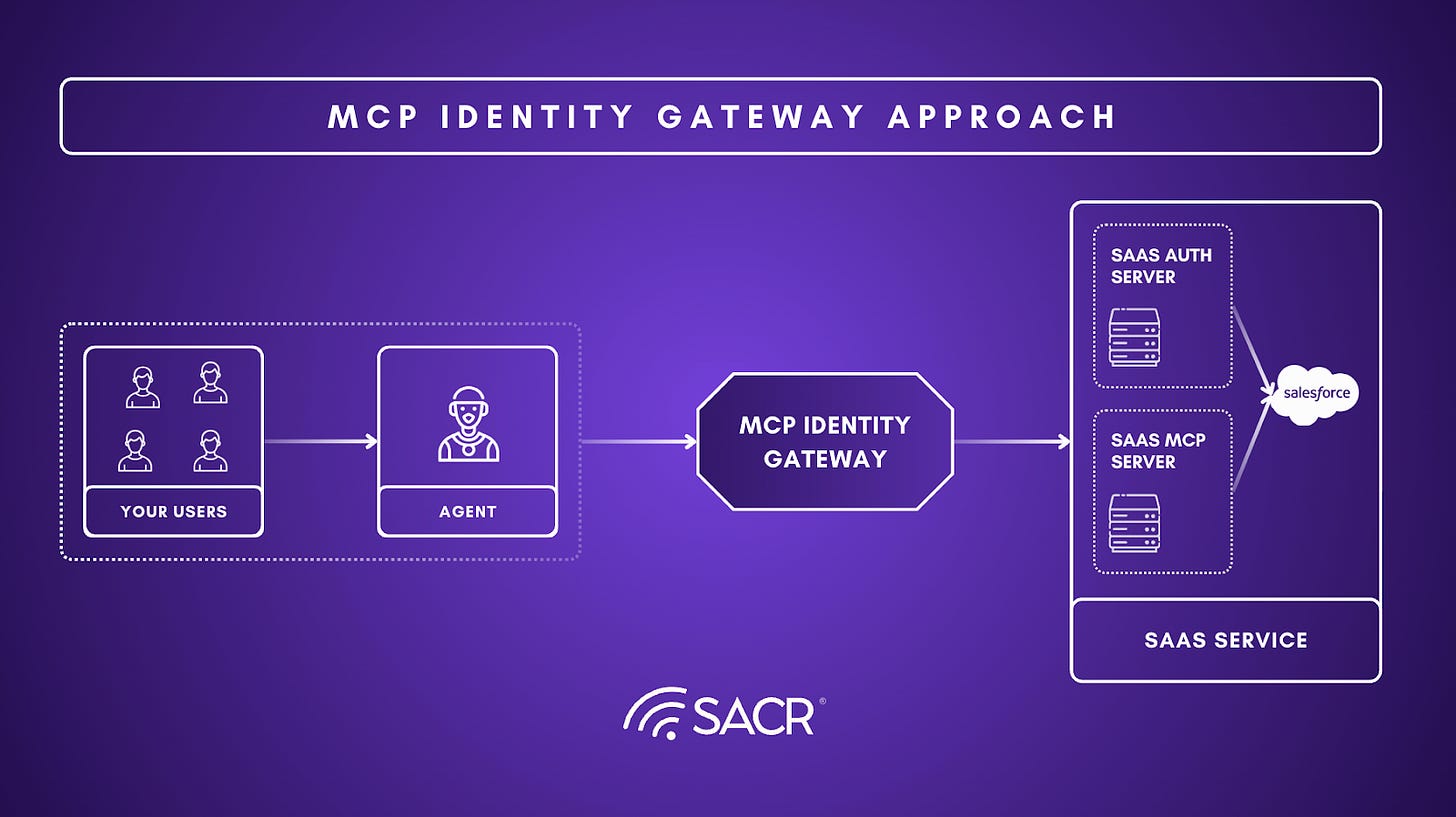

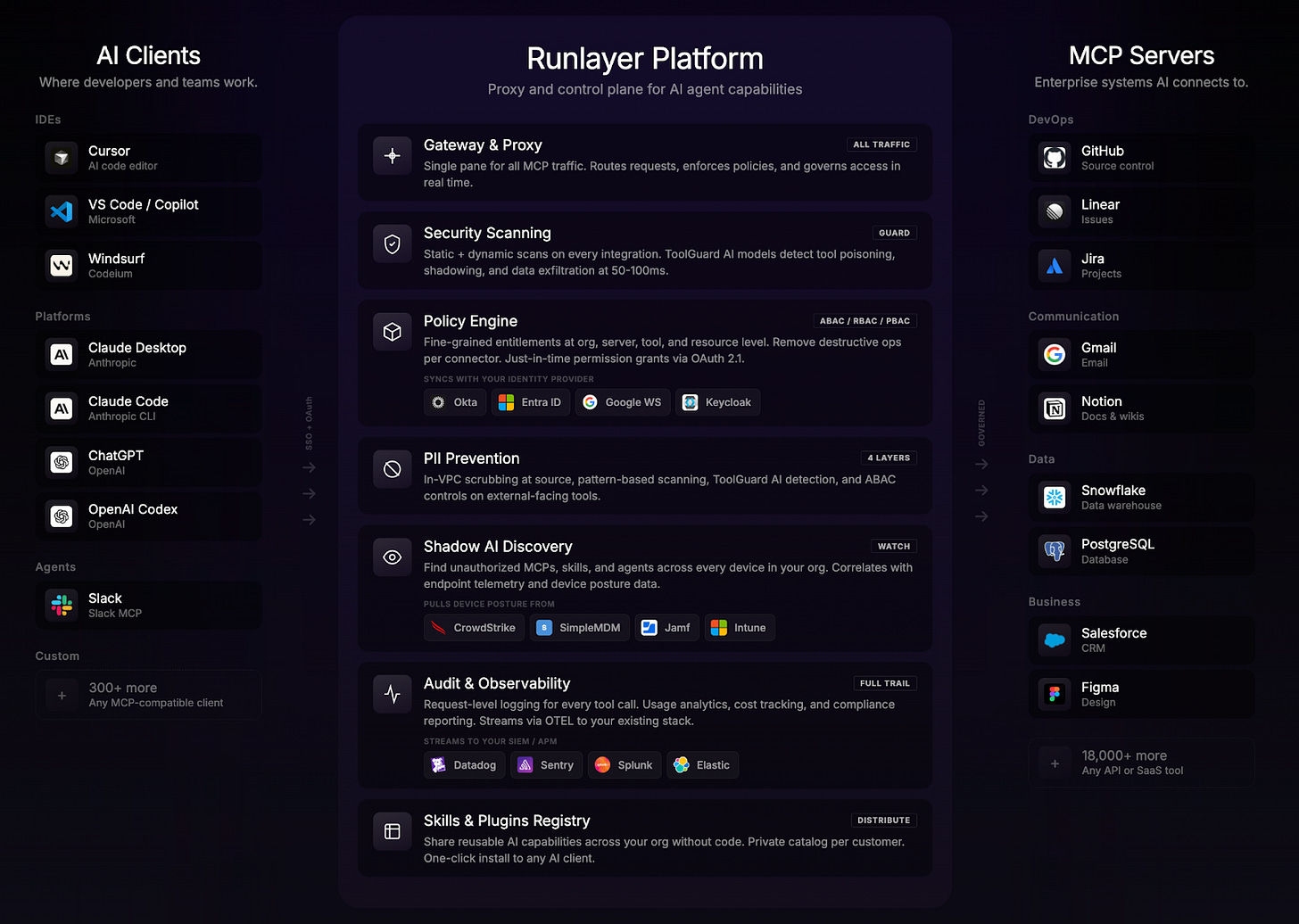

Vendors are taking two distinct approaches to MCP runtime security.

- The gateway approach routes all agent-to-tool traffic through a centralized proxy that validates requests, enforces policy, and injects credentials, enabling deterministic and, in advanced implementations, dynamic policy enforcement. The limitation is visibility: a gateway sees requests and responses, but lacks the deeper context needed to evaluate agent intent.

- The direct-access approach inverts this: by integrating natively with underlying systems, it gains rich visibility into agent behavior and why actions are being taken. The limitation is control: observing behavior is not the same as enforcing policy.

Neither approach is sufficient alone. Comprehensive MCP runtime security requires both: a gateway layer for enforcement, and a direct-access layer for the contextual intelligence that makes enforcement decisions meaningful.

For CISOs, the practical implication of this analysis is that no single vendor today fully solves the runtime security problem for AI agents across deterministic governance, non-deterministic behavioral analysis, and non-deterministic governance.

Selecting a platform based solely on its deterministic governance capabilities, which is how most security procurement decisions in this space are currently being made, leaves the most dangerous attack surface unaddressed. The questions worth asking of any vendor are not just what can you block, but what can you see, how do you build risk context over time, and how does that context change what you allow. As agent deployments move from pilot to production and from simple single-step tasks to complex autonomous workflows, the organizations that have invested in all three layers will be meaningfully better positioned to detect, respond to, and contain the security failures that are inevitable at scale.

The Challenge with Securing AI Agent Identities at Runtime

As AI agents gain access to sensitive data and production systems, security gaps become most dangerous during execution, when an agent is acting on behalf of a user or system. Unlike traditional non-human or workload identities, agents are non-deterministic: their decisions can’t be fully predicted in advance, so legacy security tools built around predictable behavior fall short. Runtime identity security solutions built specifically for agents address this by evaluating an agent’s intent, context, and access rights in real time. This paper examines how the market is responding, what a complete runtime security posture looks like, and how leading vendors are approaching each layer.

Why Runtime Identity Security Is Critical for AI Agents

Traditional security asks: is this person authorized to run this code? Runtime identity security for agents asks a harder question: should this code run, even if this agent is authorized? This shift, from access control to intent evaluation and behavioral analysis, is what makes deterministic governance alone insufficient.

Intent, and not just authorization, must be evaluated at runtime. Agents behave differently from humans and human identities. For example, when a human deletes a file, it’s a conscious act. When an agent deletes a file, it can be responding to a prompt, following a reasoning chain, or recovering from a tool error. The same API call carries different risks depending on whether a human directed it or an agent decided it autonomously.

Intent must be evaluated with each individual action at runtime, not only once when a policy is first defined. When an agent updates a database record, for example, a misinterpreted instruction or prompt injection attack could turn a routine write into data corruption. Catching that requires continuous visibility into what the agent is doing and why. That observability generates the context needed to assess intent, calculate risk, and make dynamic access decisions in real time, enabling organizations to grant least-privilege access while continuously verifying that agent behavior stays aligned with declared goals.

Intent is not static, and so an agent’s behavior must be evaluated continuously throughout its entire lifecycle. An agent that begins by performing simple data retrieval could later execute complex workflows or combine actions in unexpected ways, especially as it chains reasoning steps, integrates new tools, or responds to updated prompts. A one-time grant of even least-privilege access is therefore insufficient. Continuous runtime evaluation is required to ensure that each action remains aligned with current intent and organizational policy, and to trigger dynamic responses when it does not.

The runtime identity security problem for AI agents is magnified by the 144:1 ratio of non-human identity (NHI) credentials to human users in typical enterprises. Organizations maintain roughly 144 machine identities for every human user, including service accounts, API keys, application credentials, and workload identities. Each agent requires its own credentials to access resources, and a single human operator can provision multiple agents, each with distinct identities and permissions. When shadow agents or ephemeral instances are included, the number of active identities can quickly reach thousands per team. At this scale, manual oversight is impractical and one-time access grants are insufficient, making automated, continuous runtime security not just important but essential.

AI Agent Types and Security Considerations

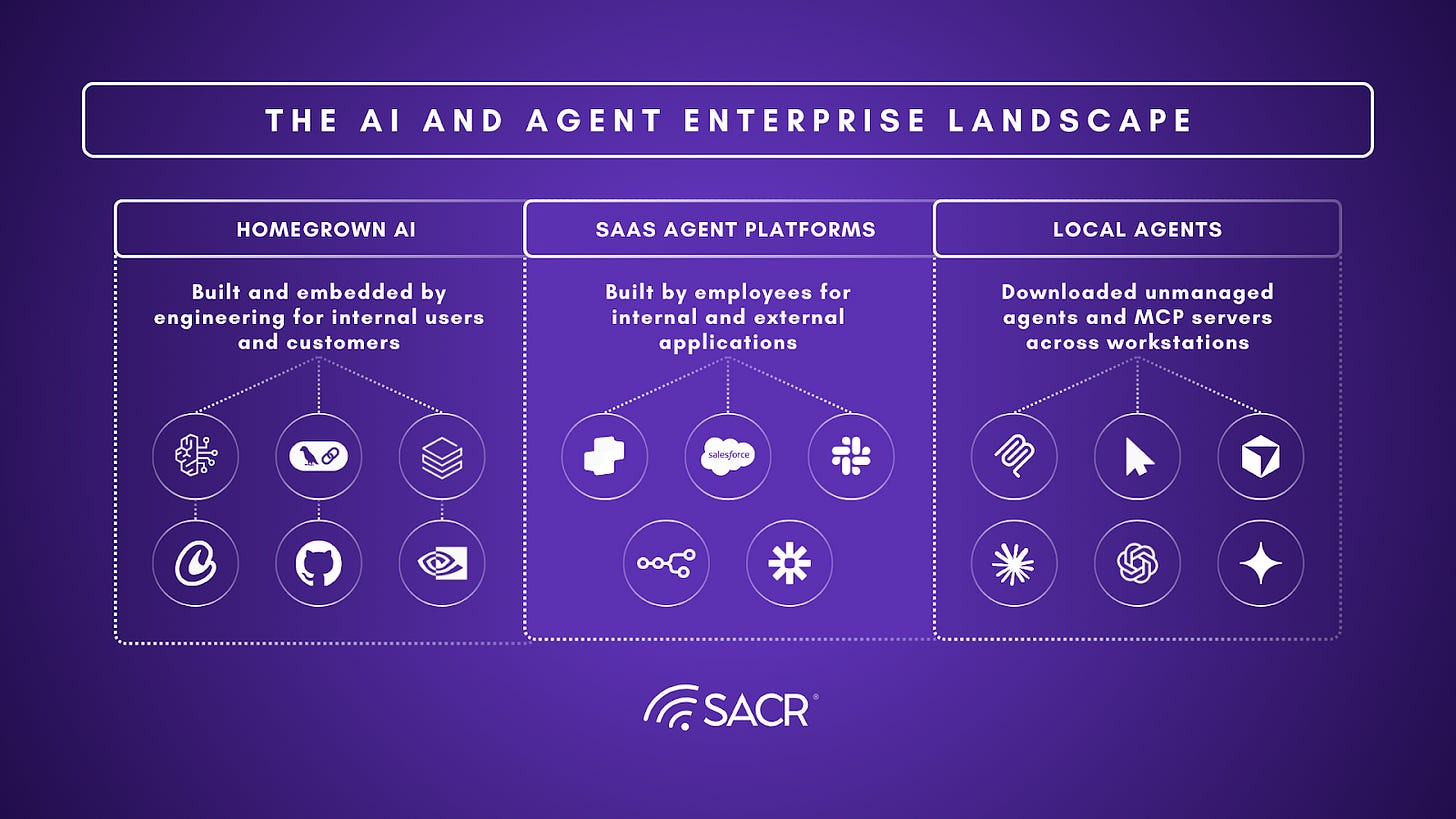

Not all agents present the same security challenges. The enterprise agent landscape breaks into three distinct categories, each with different identity risks, enforcement requirements, and visibility gaps. Understanding which agent types dominate an organization’s environment is the first step in selecting the right runtime controls.

- Homegrown agents are custom-built by engineering teams using cloud services like AWS Bedrock or GCP Vertex, data platforms, or open-source frameworks like LangChain. They run in managed infrastructure and give security teams the most architectural control, but the primary risk is scale: engineering teams can spin up hundreds of agents across multiple repositories and cloud accounts, creating sprawl that outpaces centralized governance.

- SaaS agent platforms like Microsoft Copilot Studio, Salesforce Agentforce, and ServiceNow allow non-technical employees to build and deploy agents through low-code interfaces. This is where the maker identity problem becomes acute: when a business user creates an agent using their own credentials, every subsequent user’s actions execute under the creator’s identity, meaning the agent inherits standing access that downstream users may not be entitled to.

- Local and agentic workforce tools represent the fastest-growing and least-visible category. Developer tools like Cursor, Claude Code, and Windsurf run directly on employee workstations, connecting to community MCP servers that may never touch enterprise infrastructure. These agents are effectively invisible to cloud-based security controls: they do not route through corporate proxies, do not register in cloud IAM, and often store credentials in plaintext configuration files on the endpoint. This category represents the largest blind spot in most enterprise agent security programs today.

The security implications differ across all three categories, but the underlying principles are consistent: organizations need to discover agents wherever they run, understand whose identity they operate under, enforce least-privilege access dynamically rather than statically, and monitor behavior at runtime for drift and anomalies.

Four Runtime Dimensions

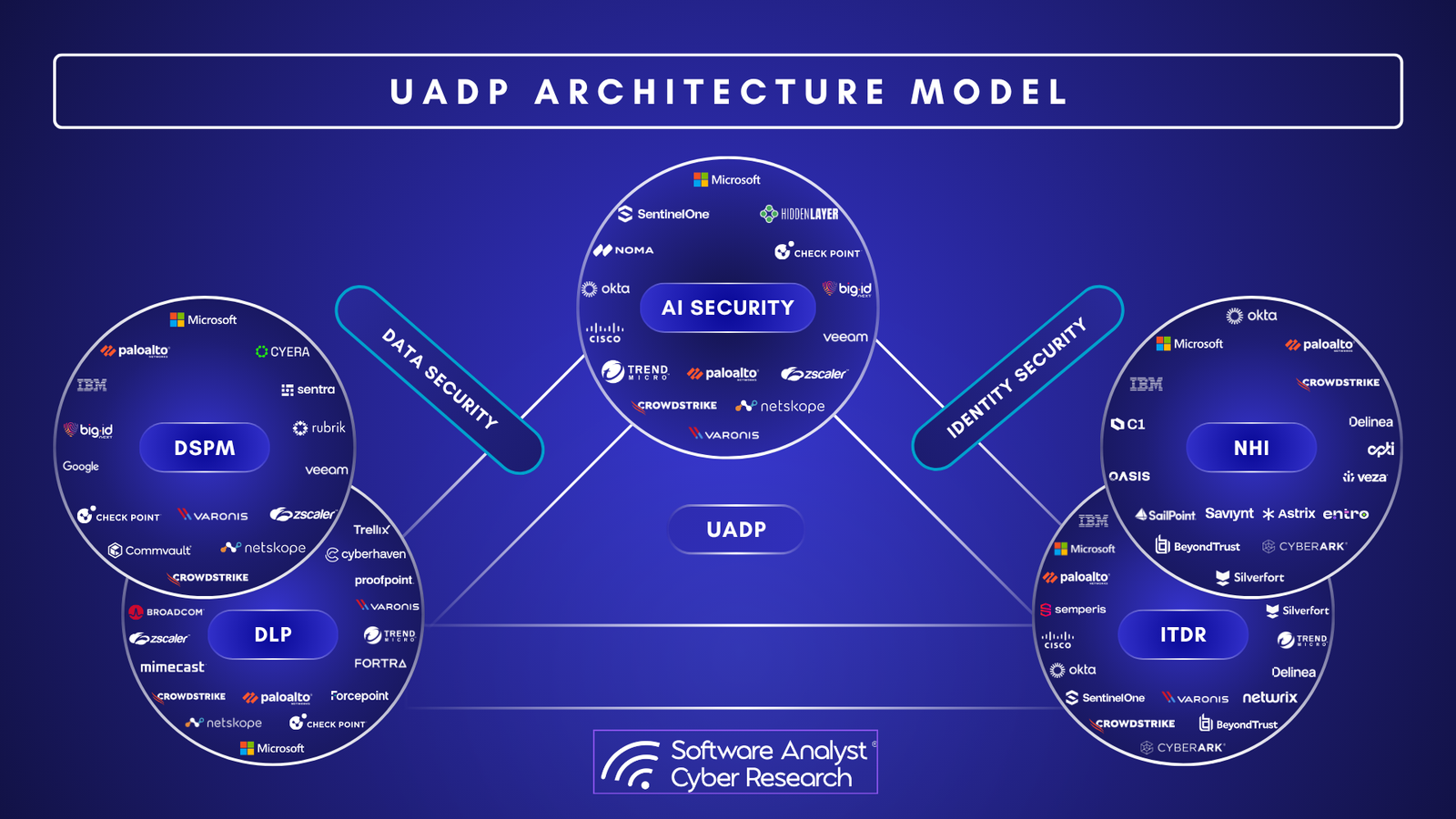

Beyond the baseline of deterministic access control that every vendor in this report implements, we identified four dimensions that define a complete runtime security posture, organized into two categories. The first is analysis of non-deterministic behavior, which encompasses Continuous Observability and Behavioral Tracking. These dimensions provide the visibility and risk context that make meaningful runtime decisions possible. The second category is non-deterministic governance, which encompasses Intent-Based Authorization and Control & Escalation.

1. Continuous Observability

When something goes wrong with an AI agent, the first question security teams ask is what happened and why. Continuous observability provides the infrastructure to answer that question. Beyond simple logging, this dimension captures session-level telemetry that records not just what an agent did, but the reasoning behind each action, the context it was operating in, and the user or system that initiated the session. That attribution chain is critical. Security and compliance teams need to be able to trace any agent action back to a specific user, a specific intent, and a specific moment in time. Without it, investigating an incident becomes guesswork. This contextual data is also what feeds the non-deterministic governance layer. As organizations deploy more agents across more workflows, observability becomes the foundation for accountability across the entire agent workforce, ensuring that autonomous and user-driven agents alike leave a clear and reconstructable record of every decision they made.
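The attribution chain described above can be sketched as a small data structure. This is a minimal illustration rather than any vendor's schema: the `AgentEvent` fields and the `SessionLog` class are hypothetical names chosen for this example, and the in-memory list stands in for whatever durable store a real deployment would use.

```python
import json
from dataclasses import asdict, dataclass
from datetime import datetime, timezone


@dataclass(frozen=True)
class AgentEvent:
    """One agent action plus the attribution needed to reconstruct it later."""
    session_id: str
    agent_id: str
    initiated_by: str   # user or system that started the session
    action: str         # tool or API the agent invoked
    resource: str       # what the action touched
    reasoning: str      # the agent's stated reason for the action
    timestamp: str      # UTC, ISO 8601


class SessionLog:
    """Append-only, in-memory log (hypothetical; real systems need durable storage)."""

    def __init__(self):
        self._events = []

    def record(self, session_id, agent_id, initiated_by, action, resource, reasoning):
        """Capture an action together with who started the session and why it ran."""
        event = AgentEvent(
            session_id, agent_id, initiated_by, action, resource, reasoning,
            timestamp=datetime.now(timezone.utc).isoformat(),
        )
        self._events.append(event)
        return event

    def reconstruct(self, session_id):
        """Return every event in a session, in the order it was recorded."""
        return [e for e in self._events if e.session_id == session_id]

    def export_jsonl(self):
        """Serialize all events as JSON Lines for downstream analysis."""
        return "\n".join(json.dumps(asdict(e), sort_keys=True) for e in self._events)
```

Recording the reasoning and the initiating identity alongside each action is what lets an investigator replay a session with `reconstruct` instead of inferring it from raw API logs.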

2. Behavioral Tracking

Agents are not static programs. Their behavior shifts based on context, instructions, and the tools available to them, which means traditional signature-based security controls will miss a significant class of threats. Behavioral tracking addresses this by establishing a baseline of what normal looks like for a given agent: how frequently it calls APIs, how much data it moves, which tools it typically uses, and in what sequence. When an agent deviates from that baseline, such as suddenly reading thousands of files, calling an external API it has never used before, or moving an unusual volume of data, the system has a meaningful signal to work with. Even without non-deterministic governance in place, that signal is useful: security teams can receive alerts, get recommendations for tightening existing policies, and use behavioral data to refine access rules over time. For organizations that do implement the non-deterministic governance layer, behavioral tracking becomes the essential input: a real-time risk score is only as good as the behavioral data behind it, and dynamic escalation policies can only respond meaningfully to drift if there is an established baseline to drift from.
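One simple way to picture a behavioral baseline is a per-metric standard score combined with a check for tools the agent has never used before. The sketch below is an assumption-laden illustration: the function names, the metric names, and the threshold of 3 standard deviations are all hypothetical, it ignores call sequence entirely, and production systems would use far richer models.

```python
from statistics import mean, pstdev


def zscore(history, value):
    """Standard score of `value` against past observations of one metric."""
    mu, sigma = mean(history), pstdev(history)
    if sigma == 0:
        # No historical variation: any change at all is an outlier.
        return 0.0 if value == mu else float("inf")
    return (value - mu) / sigma


def detect_drift(history, observed, known_tools, tools_used, z_threshold=3.0):
    """Return labels for metrics far from baseline and for never-before-seen tools."""
    findings = []
    for metric in sorted(observed):
        if metric in history and abs(zscore(history[metric], observed[metric])) > z_threshold:
            findings.append(f"metric:{metric}")
    for tool in sorted(set(tools_used) - set(known_tools)):
        findings.append(f"new_tool:{tool}")
    return findings


# Hypothetical per-session counts for one agent over five past sessions.
history = {"files_read": [10, 12, 9, 11, 10], "api_calls": [40, 42, 38, 41, 39]}
observed = {"files_read": 4000, "api_calls": 41}

findings = detect_drift(
    history, observed,
    known_tools={"files.read", "crm.get_record"},
    tools_used={"files.read", "external_upload"},
)
```

Here the sudden jump in files read and the first-ever use of `external_upload` are both flagged, while the ordinary API call count is not. Those findings could drive alerts on their own or feed a risk score.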

3. Intent-Based Authorization

Traditional security controls ask whether an agent is allowed to perform an action. Intent-based authorization asks why. Even when an agent has the technical credentials to call an API or access a system, its reasoning must stay within the boundaries defined for a given task. This is the first dimension of non-deterministic governance, and it depends directly on the observability and behavioral data generated by the two preceding dimensions. Without a continuous picture of what the agent is doing and how its behavior compares to its baseline, intent evaluation has no context to work with. In practice, vendors implementing this capability evaluate the agent’s stated intent in real time, dynamically granting or withholding specific privileges for that session rather than relying on static access rules set at deployment. An agent instructed to retrieve a customer record has a different intent than one instructed to export all customer records, even if both actions are technically within its permissions. Intent-based authorization is what makes that distinction enforceable at runtime, shifting the security model from a one-time permissions check to a continuous audit of whether the agent’s reasoning remains aligned with its authorized purpose.
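The retrieve-versus-export distinction can be made concrete with a rule-based stand-in. This sketch assumes the task scope has already been derived from the user's instruction. Real intent evaluation would rely on much richer signals than an action list and a record count, and every name here (`Task`, `Request`, `authorize`, the permission strings) is hypothetical.

```python
from dataclasses import dataclass

# Static permissions: both actions are technically allowed for this agent.
AGENT_PERMISSIONS = frozenset({"customers.read", "customers.export"})


@dataclass(frozen=True)
class Task:
    """Scope derived from what the agent was asked to do in this session."""
    purpose: str
    allowed_actions: frozenset
    max_records: int


@dataclass(frozen=True)
class Request:
    action: str
    record_count: int


def authorize(request, task):
    """Check static permissions first, then whether the request fits the task."""
    if request.action not in AGENT_PERMISSIONS:
        return (False, "not permitted for this agent")
    if request.action not in task.allowed_actions:
        return (False, "action outside task scope")
    if request.record_count > task.max_records:
        return (False, "volume exceeds task scope")
    return (True, "within task scope")


task = Task("retrieve one customer record", frozenset({"customers.read"}), max_records=1)
single_lookup = authorize(Request("customers.read", 1), task)
bulk_export = authorize(Request("customers.export", 50_000), task)
```

Both requests pass the static permission check, but only the single lookup matches the purpose of the session. The bulk export is withheld even though the agent holds the `customers.export` permission.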

4. Control & Escalation

Control and escalation is the dimension that closes the loop on the entire three-layer model. Where intent-based authorization makes dynamic access decisions, control and escalation takes dynamic action when those decisions are not enough. This means pausing an agent mid-execution, routing a pending action to a human for approval, or terminating a session entirely based on what the agent is doing right now. What makes this dimension distinct from static circuit breakers is that the escalation thresholds themselves are dynamic. Rather than hardcoding which actions always require approval, teams can configure thresholds that adapt based on the agent’s current risk score, the sensitivity of the resource being accessed, or drift flagged by behavioral tracking. An agent whose risk score has been climbing across a session is treated differently than one performing its first anomalous action. When thresholds are crossed, the agent halts and waits for human review. If no approval comes, or if the situation warrants immediate action, the session is terminated.
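A dynamic escalation threshold might look like the following sketch, in which the approval bar drops as resource sensitivity rises or when drift has been flagged. The numeric values, the `Decision` names, and the rule that an unanswered approval request ends the session are illustrative assumptions, not a description of any product.

```python
from enum import Enum


class Decision(Enum):
    ALLOW = "allow"
    PAUSE_FOR_APPROVAL = "pause_for_approval"
    TERMINATE = "terminate"


# Hypothetical amounts by which resource sensitivity lowers the approval bar.
SENSITIVITY_PENALTY = {"low": 0.0, "medium": 0.15, "high": 0.3}


def approval_threshold(sensitivity, drift_flagged, base=0.8):
    """Risk score at or above which a human must approve the pending action."""
    threshold = base - SENSITIVITY_PENALTY[sensitivity]
    if drift_flagged:
        threshold -= 0.2
    return max(threshold, 0.1)


def decide(risk_score, sensitivity, drift_flagged, terminate_at=0.95):
    """Map the agent's current risk score to an action for the pending request."""
    if risk_score >= terminate_at:
        return Decision.TERMINATE
    if risk_score >= approval_threshold(sensitivity, drift_flagged):
        return Decision.PAUSE_FOR_APPROVAL
    return Decision.ALLOW


def resolve_pause(approved):
    """`approved` is True, False, or None when no reviewer answered in time."""
    return Decision.ALLOW if approved else Decision.TERMINATE
```

With these assumed values, a risk score of 0.5 is allowed against a low-sensitivity resource but pauses for approval against a high-sensitivity resource once drift has been flagged. The same score produces different outcomes because the threshold itself moved.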

No vendor provides equal strength across all four dimensions. Understanding where each vendor excels and where they fall short is essential for CISOs building a runtime security strategy.

The MCP Security Case Study: A Deep Dive

What Is MCP?

The Model Context Protocol (MCP) defines a standardized interface for AI models to request contextual information from external systems. Rather than hardcoding credentials, connection strings, and API logic into model prompts, MCP externalizes these capabilities into servers that the model can query. With this architectural separation, MCP has become the primary protocol for agent-to-resource communication in developer platforms like Cursor and Claude Code.

MCP is not inherently insecure. The protocol itself includes mechanisms for authentication, authorization, and credential isolation. However, the barrier to deploying MCP servers has become so low that a significant portion of the public MCP ecosystem (the Smithery registry and similar repositories) contains servers written without baseline security practices.

The MCP Security Landscape

Plaintext credentials are endemic. 53% of publicly available MCP servers rely on insecure static secrets hardcoded into configuration or source code. The Smithery registry has documented instances of PostgreSQL passwords, API keys, and AWS credentials stored as plaintext in repository commits. Organizations that instantiate these servers inherit the credential exposure without knowing it.

Tool poisoning attacks have high success rates. The MCPTox benchmark demonstrated a 72.8% success rate for tool poisoning attacks, where malicious MCP servers inject false results or manipulated outputs to influence agent decisions. Most agents do not validate tool responses for plausibility, making them vulnerable to poisoned data from compromised MCP servers.

Credential practices at scale are insecure. Only 8.5% of MCP servers use OAuth, leaving the vast majority reliant on insecure credential practices. This includes hardcoded secrets, unencrypted configuration files, and credentials passed through environment variables without access controls.
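A basic check for hard-coded credentials can be sketched as a scan over an MCP client configuration. The example assumes a JSON file shaped like the `mcpServers` layout (with `command`, `args`, and `env` per server) used by several MCP clients, and it assumes that values written as `${VAR}` are placeholders resolved elsewhere. The patterns are deliberately narrow and would miss many real secrets.

```python
import json
import re

SECRET_KEY_HINT = re.compile(r"(KEY|TOKEN|SECRET|PASSWORD)", re.IGNORECASE)
AWS_ACCESS_KEY_ID = re.compile(r"\bAKIA[0-9A-Z]{16}\b")
URL_WITH_PASSWORD = re.compile(r"\b[a-z][a-z0-9+.-]*://[^/\s:@]+:[^/\s@]+@")
ENV_REFERENCE = re.compile(r"^\$\{?\w+\}?$")  # e.g. ${DB_PASSWORD}


def find_static_secrets(config_text):
    """Return (server, location) pairs without echoing the secret values."""
    config = json.loads(config_text)
    findings = []
    for name, server in config.get("mcpServers", {}).items():
        for key, value in server.get("env", {}).items():
            value = str(value)
            if ENV_REFERENCE.match(value):
                continue  # placeholder, not a literal secret
            if SECRET_KEY_HINT.search(key) or AWS_ACCESS_KEY_ID.search(value):
                findings.append((name, f"env:{key}"))
        for arg in server.get("args", []):
            arg = str(arg)
            if URL_WITH_PASSWORD.search(arg) or AWS_ACCESS_KEY_ID.search(arg):
                findings.append((name, "args"))
    return findings


# Hypothetical config; the AWS value is AWS's published documentation example key.
SAMPLE_CONFIG = json.dumps({
    "mcpServers": {
        "db": {"command": "npx", "env": {},
               "args": ["pg-server", "postgresql://app:hunter2@db.internal/prod"]},
        "notes": {"command": "notes-server", "args": [],
                  "env": {"NOTES_API_TOKEN": "${NOTES_API_TOKEN}"}},
        "storage": {"command": "s3-server", "args": [],
                    "env": {"AWS_ACCESS_KEY_ID": "AKIAIOSFODNN7EXAMPLE"}},
    }
})

findings = find_static_secrets(SAMPLE_CONFIG)
```

The scan flags the connection string with an embedded password and the literal AWS key, and it skips the token that is supplied by reference. Reporting only the location, not the value, avoids copying the secret into yet another log.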

MCP Inventory, Discovery, and Governance

Before organizations can secure MCP usage at runtime, they need a reliable way to inventory and govern MCP servers and tools. In practice, this means maintaining a registry of approved MCP servers, knowing which servers and tools are being used by which agents, and enforcing centralized policies for allow/deny decisions, permissions, and lifecycle management. The emerging MCP ecosystem is already moving in this direction: the official MCP Registry is designed as a standardized metadata layer for discovering servers, while enterprise control planes increasingly treat MCP servers and tools as governable resources rather than ad hoc developer-side integrations. In other words, MCP security is not only about blocking malicious tool calls; it is also about ensuring that unapproved servers are never introduced silently, that approved servers are onboarded through a review process, and that tool access can be centrally audited, updated, or revoked as organizational policy changes.
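The inventory and governance loop described above can be reduced to a small sketch: a registry of approved servers and tools, plus an audit of observed usage against it. The `McpRegistry` class, the server names, and the shape of the usage records are hypothetical and are not tied to the official MCP Registry or any vendor control plane.

```python
from dataclasses import dataclass, field


@dataclass
class McpRegistry:
    """Approved MCP servers mapped to the tools each one may expose."""
    approved: dict = field(default_factory=dict)

    def approve(self, server, tools):
        """Onboard a server after review, listing the tools agents may call."""
        self.approved[server] = set(tools)

    def revoke(self, server):
        """Remove a server centrally when policy changes."""
        self.approved.pop(server, None)

    def is_allowed(self, server, tool):
        return tool in self.approved.get(server, set())


def unapproved_usage(registry, observed):
    """Audit (agent, server, tool) records and return those outside the registry."""
    return sorted({(a, s, t) for a, s, t in observed if not registry.is_allowed(s, t)})


registry = McpRegistry()
registry.approve("github-mcp", ["list_issues", "get_file"])

observed = [
    ("docs-agent", "github-mcp", "get_file"),
    ("docs-agent", "github-mcp", "delete_repo"),          # tool never approved
    ("dev-laptop-agent", "community-pdf-mcp", "parse"),   # server never onboarded
]
violations = unapproved_usage(registry, observed)
```

Denying by default means an unreviewed server or tool shows up as a violation rather than being introduced silently, and `revoke` gives a single place to withdraw access.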

Why MCP Security Matters for Agent Runtime

MCP security matters because MCP is increasingly one of the primary ways agents operate at runtime. In many modern agent deployments, the most consequential actions an agent takes during execution, such as retrieving enterprise context, querying data sources, invoking external tools, and taking action in downstream systems, happen through MCP servers. In other words, MCP is not just a configuration convenience or developer abstraction; it is often the operational interface between the agent and the outside world. That makes MCP security a runtime problem in the most direct sense: if an MCP server is malicious, overprivileged, poorly authenticated, or loosely governed, the agent’s live behavior can be compromised even if the model itself is functioning as intended.

At runtime, a mismanaged MCP deployment introduces a “wild west” ecosystem where agents can dynamically discover and use third-party tools, creating critical risks across the following four layers:

Supply Chain Risks: Because the MCP environment is largely unregulated, organizations face the risk of “tool poisoning” or backdoors in community-developed servers. Malicious or untrusted MCP servers can be intentionally designed to hijack an agent’s reasoning or exfiltrate sensitive credentials. Organizations are not equipped to audit every MCP server dependency in the same way they audit software dependencies.

Communication Security Risks: During runtime, the communication channel between an agent and an MCP server is vulnerable to indirect prompt injection. This occurs when an agent reads data that contains instructions to override the agent’s original goals. Without a “firewall” layer, this can lead to sensitive data leakage as the agent is tricked into transmitting internal PII to an external, untrusted MCP tool.

Authorization Risks: Traditional security often grants agents overly broad “standing access” to MCP servers, enabling them to perform any action the tool allows. This lacks the granular control needed to distinguish between safe actions (reading a file) and dangerous ones (deleting a production database).

Credential Management Risks: MCP servers overwhelmingly rely on long-lived static secrets (API keys, tokens) rather than modern credential exchange protocols like OAuth. When an agent authenticates to an MCP server using a static credential, that secret is often embedded in configuration files, shared across sessions, and never rotated. If any single MCP server is compromised, the attacker gains persistent access to every downstream resource that credential unlocks. This is compounded by the “maker identity” problem, where an agent operates under its creator’s static token rather than the end user’s scoped session, meaning a compromised credential exposes not just one user’s data but potentially an entire team’s.
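The authorization granularity gap, in which standing access lets an agent perform any action a tool exposes, is the one a deterministic, action-level policy addresses. A minimal sketch follows, assuming hypothetical agent and tool names and a first-match-wins rule list with default deny.

```python
from fnmatch import fnmatchcase

# (agent pattern, tool pattern, effect). First match wins; no match means deny.
POLICY = [
    ("report-agent", "files.read", "allow"),
    ("report-agent", "files.*", "deny"),
    ("ops-agent", "db.query", "allow"),
    ("*", "db.drop_*", "deny"),
]


def is_allowed(agent, tool, policy=POLICY):
    """Evaluate one tool call against the rule list, denying by default."""
    for agent_pattern, tool_pattern, effect in policy:
        if fnmatchcase(agent, agent_pattern) and fnmatchcase(tool, tool_pattern):
            return effect == "allow"
    return False
```

Under this policy `report-agent` can read files but not delete them, and no agent can drop a database table. The check is predictable and easy to audit, which is the strength of the deterministic layer. It does not, on its own, say anything about why an allowed call is being made.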

The Architectural Question: Gateway vs. Direct-Access Monitoring

Two enforcement approaches have emerged for managing MCP-layer risk:

MCP Gateway: Agents do not directly connect to MCP servers. Instead, all MCP communication flows through a centralized gateway that validates server responses, enforces policies, and prevents credential exposure. This architecture provides centralized control and the ability to inspect tool results for poisoning while preventing access to unapproved MCP servers. However, it requires significant architectural changes, introduces a single point of failure, and adds latency to every tool call within the agent’s reasoning cycle.

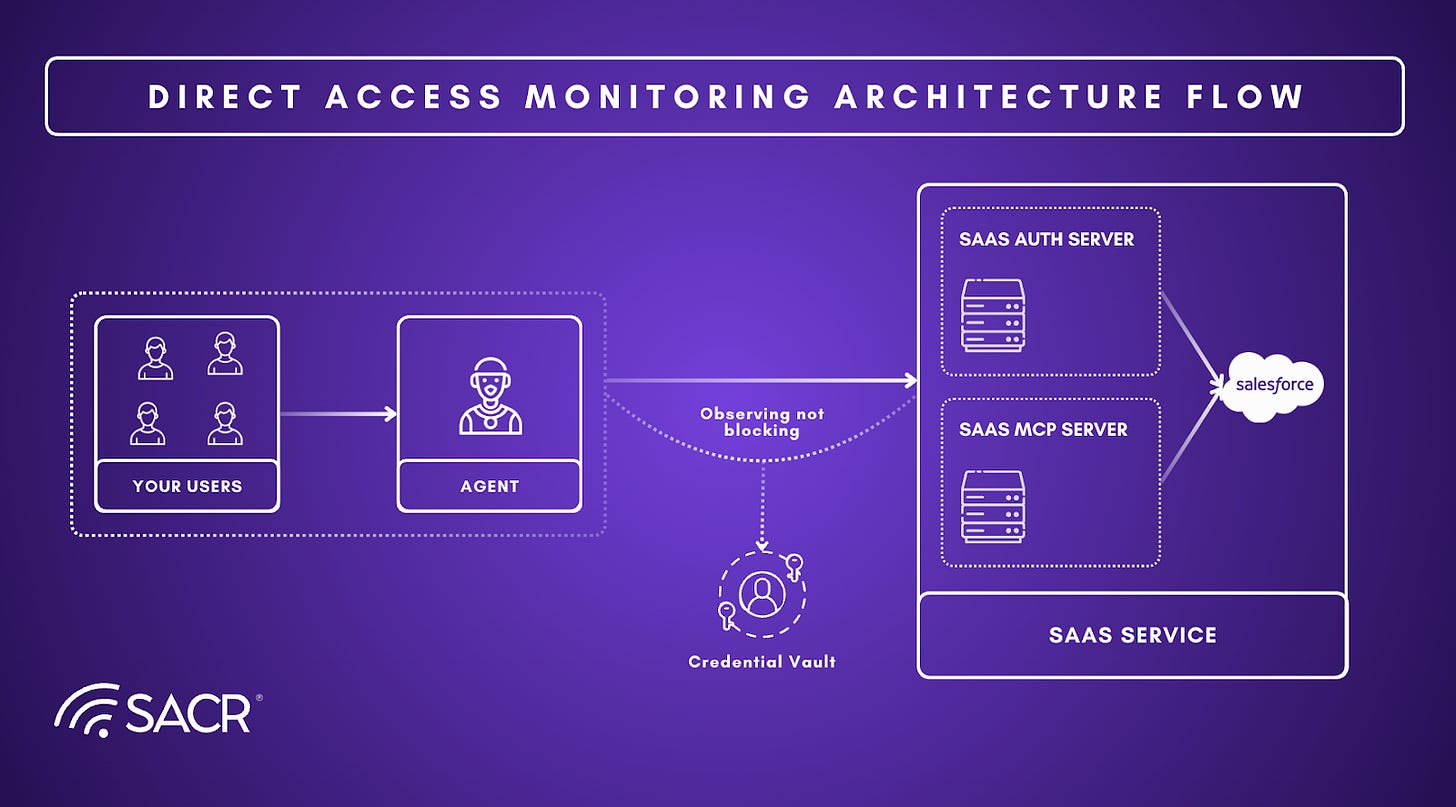

Direct-Access Monitoring: Agents connect directly to MCP servers, but MCP server credentials are managed centrally (stored in vaults, rotated regularly, and scoped to specific tasks). Post-execution monitoring detects anomalies and credential misuse. This approach preserves agent autonomy, minimizes latency, and avoids a single point of failure in the communication path. It remains fundamentally reactive, however: post-execution monitoring may identify poisoned tool responses or credential misuse only after an unauthorized action has already occurred.

Currently, the majority of vendors have implemented the MCP Gateway approach, as it offers a familiar “choke point” for enforcing security policies. However, a distinct group of vendors argues that this method lacks the deep execution context, such as the agent’s internal reasoning or the specific user session history, needed to make highly accurate security decisions. Consequently, these vendors are pursuing Direct-Access Monitoring, favoring a strategy that integrates more closely with the agent’s runtime environment to capture richer context, even if it requires more sophisticated detection capabilities.

The difference between these two approaches maps directly onto the three-layer framework. The gateway approach is fundamentally a governance mechanism: it sits in the communication path and enforces deterministic policy, and in more advanced implementations it can apply dynamic controls based on real-time risk signals. What it lacks on its own is the deep visibility into agent behavior and intent that the observability layer requires. It can block or allow a request, but without access to the raw reasoning and context behind that request, it has limited ability to build the behavioral baselines and risk intelligence that non-deterministic governance depends on.

The direct-access approach inverts this: by integrating natively with the underlying systems, it gains rich contextual data about what agents are doing and why, generating exactly the kind of signal that feeds meaningful intent evaluation and behavioral analysis. But observing behavior is not the same as controlling it. Direct-access monitoring is inherently reactive, identifying poisoned tool responses or credential misuse after the fact rather than intercepting them in real time.

Neither approach eliminates MCP-layer risk on its own.

Critiques of MCP Gateways

MCP gateways face several structural limitations that may constrain their long-term effectiveness as the sole enforcement point for agentic runtime security.

The Shadow Agent Problem: MCP gateways operate as inline proxies: they can only enforce policy on traffic that routes through them. In practice, most enterprises have no reliable mechanism to guarantee that every agent in the organization communicates exclusively through a designated gateway. Shadow agents, those built ad hoc by developers, spun up in notebooks, or deployed through SaaS platforms without security team oversight, bypass the gateway entirely. This is analogous to the limitations of traditional network firewalls before the rise of endpoint detection: if the traffic never hits the chokepoint, the chokepoint is irrelevant. As one vendor noted during our research, organizations are already discovering tens of thousands of agents in their first visibility scans, many of which were unknown to security teams. A gateway that secures only the agents it knows about provides a false sense of coverage.

Lack of Session Context: MCP gateways typically evaluate each tool call in isolation, inspecting the request payload, applying allow/deny rules, and forwarding or blocking. What they cannot do is reason about the broader context of an agent’s session: what instructions the agent was given, what actions it has taken so far in the current conversation, or how this request compares to the agent’s historical behavior patterns. There are some workarounds, but they provide limited visibility. This is a critical gap. A file deletion request may be perfectly legitimate in one session context (a user-directed cleanup task) and deeply suspicious in another (an agent that has been hijacked via indirect prompt injection mid-conversation). Without access to conversation history, agent instructions, and identity provider integrations, a gateway is making authorization decisions with incomplete information, effectively enforcing static rules against a dynamic, context-dependent threat surface.

Protocol Obsolescence Risk: Perhaps the most consequential challenge facing MCP gateways is the possibility that MCP itself becomes a transitional technology rather than a permanent standard. Developers are already beginning to shift toward “skills”-based architectures, where agents communicate directly with downstream APIs (e.g., calling the Notion API, Salesforce API, or GitHub API natively) rather than routing through the MCP layer. Skills-based approaches avoid the performance overhead and cost associated with MCP’s intermediary step, and major platforms are actively investing in these direct-integration models. If agents increasingly bypass MCP in favor of native API calls, an MCP gateway secures a diminishing share of agent-to-tool communication. It becomes, as one security architect described it, a lock on a door that fewer agents walk through each quarter.
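The session-context critique can be illustrated by comparing a stateless rule with one that sees the session. In this sketch the `Session` fields, the trust labels, and the escalation rule are hypothetical simplifications. The point is only that the same `files.delete` call can warrant different outcomes depending on what preceded it.

```python
from dataclasses import dataclass, field

ALLOWED_TOOLS = {"files.read", "files.delete", "web.fetch"}
DESTRUCTIVE_TOOLS = {"files.delete"}


@dataclass
class Session:
    task_scope: set                               # tools the user-directed task needs
    history: list = field(default_factory=list)   # (tool, content_trust) of earlier calls


def stateless_decision(tool):
    """Evaluate a tool call in isolation, as a payload-only rule would."""
    return "allow" if tool in ALLOWED_TOOLS else "deny"


def session_aware_decision(tool, session):
    """Escalate destructive calls that fall outside the task after untrusted input."""
    if stateless_decision(tool) == "deny":
        return "deny"
    saw_untrusted = any(trust == "untrusted" for _, trust in session.history)
    if tool in DESTRUCTIVE_TOOLS and tool not in session.task_scope and saw_untrusted:
        return "escalate"
    return "allow"


# A user-directed cleanup task versus a research task that read untrusted web content.
cleanup = Session({"files.read", "files.delete"}, [("files.read", "trusted")])
research = Session({"web.fetch"}, [("web.fetch", "untrusted")])
```

The stateless rule allows the deletion in both sessions. The session-aware rule allows it for the cleanup task and escalates it for the research session, where a delete was never part of the task and untrusted content had already entered the conversation.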

Recommendations for CISOs

The central decision every CISO faces when building a runtime security program for AI agents is not which vendor to buy, but which layers of the framework to implement and when. The following recommendations are intended to guide that decision.

Start with deterministic governance, but do not stop there. Every organization deploying agents needs a policy engine that controls what agents can and cannot access. This is the baseline, and without it nothing else works. But CISOs should be clear-eyed that deterministic governance alone will not catch the threats that matter most with AI agents. An agent operating entirely within its permitted scope can still cause significant damage if its intent drifts, if it is subject to prompt injection, or if it combines permitted actions in ways that produce unauthorized outcomes. Plan for the layers above from the beginning, even if you do not implement them immediately.

Invest in observability before non-deterministic governance. The quality of every dynamic decision in the third layer is determined by the quality of the data generated in the second. Before evaluating vendors on their intent-based authorization or escalation capabilities, ask what their observability layer actually captures: does it record agent reasoning and not just actions? Can it attribute every action to a specific user, intent, and moment in time? Can it build behavioral baselines that are specific enough to distinguish meaningful drift from normal variability? A strong governance layer built on weak observability will produce unreliable decisions at scale.

Make an explicit decision about non-deterministic governance. Introducing dynamic, intent-based policy decisions into your security architecture is not a default next step. It is a deliberate choice with real tradeoffs. Non-deterministic governance reduces the risk of agents acting outside their intended purpose, but it also introduces false positives that can disrupt legitimate agent workflows and false negatives that can create a false sense of security. CISOs should assess their organization’s tolerance for both before committing to this layer. For organizations in early stages of agent deployment, a mature observability layer with human-reviewed recommendations may be the more prudent starting point.

Assess your agent archetypes before selecting a vendor. The right runtime security architecture depends heavily on where your agents are running. Homegrown agents in cloud infrastructure have different visibility and enforcement requirements than SaaS platform agents or local developer tools like Cursor and Claude Code. Many vendors have strong coverage for one archetype and limited coverage for others. Map your current and anticipated agent population before evaluating platforms, and prioritize vendors whose enforcement surface matches where your agents actually operate.

Treat MCP security as a distinct requirement. Evaluate whether your vendor of choice takes a gateway approach, a direct-access approach, or both, and understand the tradeoffs of each. Organizations standardizing on MCP should explicitly require coverage of supply chain risk, hard-coded credential detection, and cross-tool exfiltration detection as part of any runtime security evaluation.

Vendor Deep Dives

The remainder of the report grounds this architecture in real-world implementation patterns through case studies and representative vendors. We’ve partnered with 15 vendors who are leading innovation in this new ecosystem. They include the following:

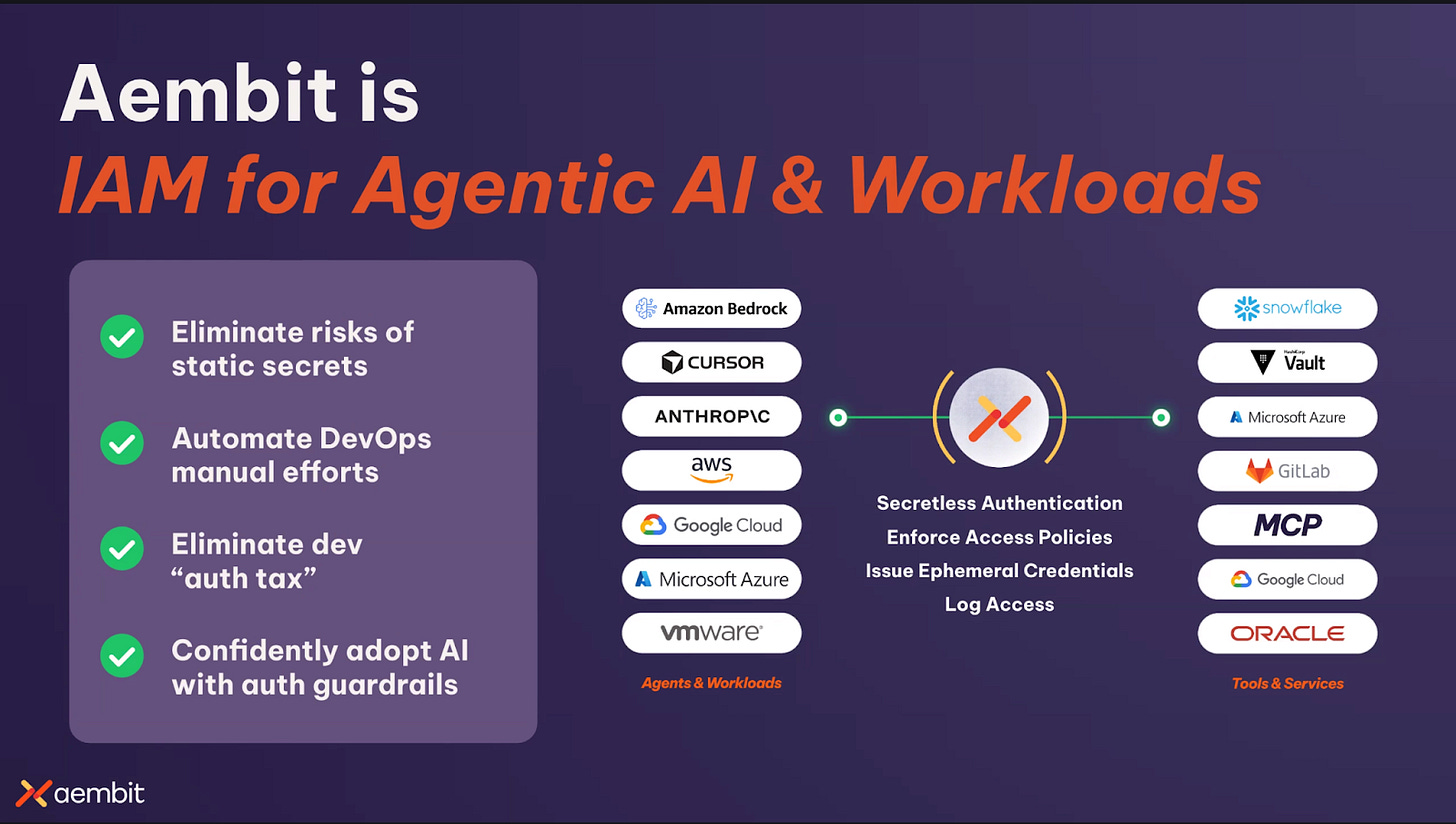

Aembit

Overview: Aembit approaches agent security as a runtime identity and access-control problem. Its core model is built around replacing static credentials with identity-based, policy-driven access in which applications, workloads, and agents receive short-lived credentials at runtime rather than storing secrets directly. In this architecture, the agent does not need to manage or retain the credential itself; Aembit verifies identity, evaluates policy, brokers the appropriate access, and centralizes the resulting audit trail. The platform is therefore best understood as a runtime enforcement layer for non-human identity (NHI) access across workloads, services, and agentic systems.

Discovery: Aembit defines a non-human identity for each AI agent and optionally binds upstream user context. Policies then enforce least-privilege access based on the combined agent and human attributes. Blended identity is important because users do not want agents to inherit all their rights; rights should be task-specific.

At the architectural level, Aembit’s secretless model is designed to reduce both the operational burden and the attack surface associated with static secrets. The platform intercepts outbound access requests, cryptographically attests the workload or agent identity based on the underlying runtime or infrastructure, applies authorization policy, and then issues or retrieves a short-lived credential for the target system. That credential is delivered only for the specific interaction and is not intended to persist in developer-managed workflows or long-lived application configuration. In environments where dynamic credentialing is supported, this materially reduces direct credential handling by developers and operators while creating a more centralized and auditable access path.
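The attest, authorize, and issue sequence described above can be sketched generically. This is not Aembit's API: the `ATTESTED` set stands in for real cryptographic attestation, and the `broker_access` function, the policy table, and the 300-second lifetime are assumptions made for illustration.

```python
import secrets
from dataclasses import dataclass
from typing import Optional

@dataclass(frozen=True)
class Credential:
    token: str
    target: str
    expires_at: float  # epoch seconds

# Stand-ins: a real system would verify a signed attestation document here.
ATTESTED = {"billing-agent"}
POLICY = {("billing-agent", "payments-db")}

def broker_access(workload: str, target: str, now: float,
                  ttl: float = 300.0) -> Optional[Credential]:
    """Attest identity, evaluate policy, then mint a short-lived credential."""
    if workload not in ATTESTED:
        return None  # identity could not be attested
    if (workload, target) not in POLICY:
        return None  # policy denies this access
    return Credential(secrets.token_urlsafe(16), target, now + ttl)

def is_valid(cred: Credential, now: float) -> bool:
    """A credential is only usable before its expiry."""
    return now < cred.expires_at
```

Because the workload never stores the token, a leaked configuration file or repository contains nothing to steal, and an intercepted token is only useful until it expires.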

Deterministic Governance: Using our runtime-security framework as an analytical lens, Aembit appears strongest in three areas: deterministic governance, control and escalation, and continuous observability. At the deterministic governance layer, its architecture is well aligned to enforcing runtime access boundaries around agent-to-service and agent-to-tool interactions by brokering credentials only at the moment of use and by inserting an identity-aware gateway into MCP request flows. The platform’s emphasis on short-lived access, centralized policy, and circuit-breaker controls maps naturally to control and escalation, particularly in environments where security teams want an immediate mechanism for revoking or halting agent access. Its centralized logging and blended-identity model also support continuous observability by creating a clear record of which user, agent, and service were involved in a given transaction.

One of Aembit’s clearest strengths is the conceptual clarity of its model. Rather than beginning with discovery or post hoc monitoring, it starts from the premise that non-human access should be governed through identity, short-lived authorization, policy evaluation, and centralized control. That framework is especially compelling for organizations that already view agent security as an extension of the broader workload and non-human identity problem. The same architectural principles can be applied across traditional workloads, scripts, cloud services, MCP-connected agents, and other machine-driven access patterns, making the platform well suited to enterprises looking for a unified runtime access layer rather than a point solution focused only on agents.

MCP Security: Aembit extends this model into MCP-mediated agent workflows through a dedicated control plane. Its MCP architecture introduces an identity gateway between agents and MCP-connected services, along with an authorization service that combines workload identity and human identity into what the company describes as a blended identity. This enables access decisions and audit trails to reflect both the software entity and the user operating behind it, which is especially important for user-driven agents acting on behalf of a person. Within this model, Aembit emphasizes separation between agent identity, MCP server identity, and downstream service credentials, with the goal of improving control and traceability across MCP-based interactions.

Analyst Recommendation: Aembit is particularly well positioned for organizations seeking to modernize runtime access around agents without forcing developers to handle credentials directly. Its model of cryptographic attestation, ephemeral access, policy-based authorization, and centralized auditability provides a strong foundation for securing agent interactions with sensitive systems and services. For enterprises approaching agent security through the broader lens of non-human identity and runtime access management, Aembit offers a coherent and architecturally consistent approach.

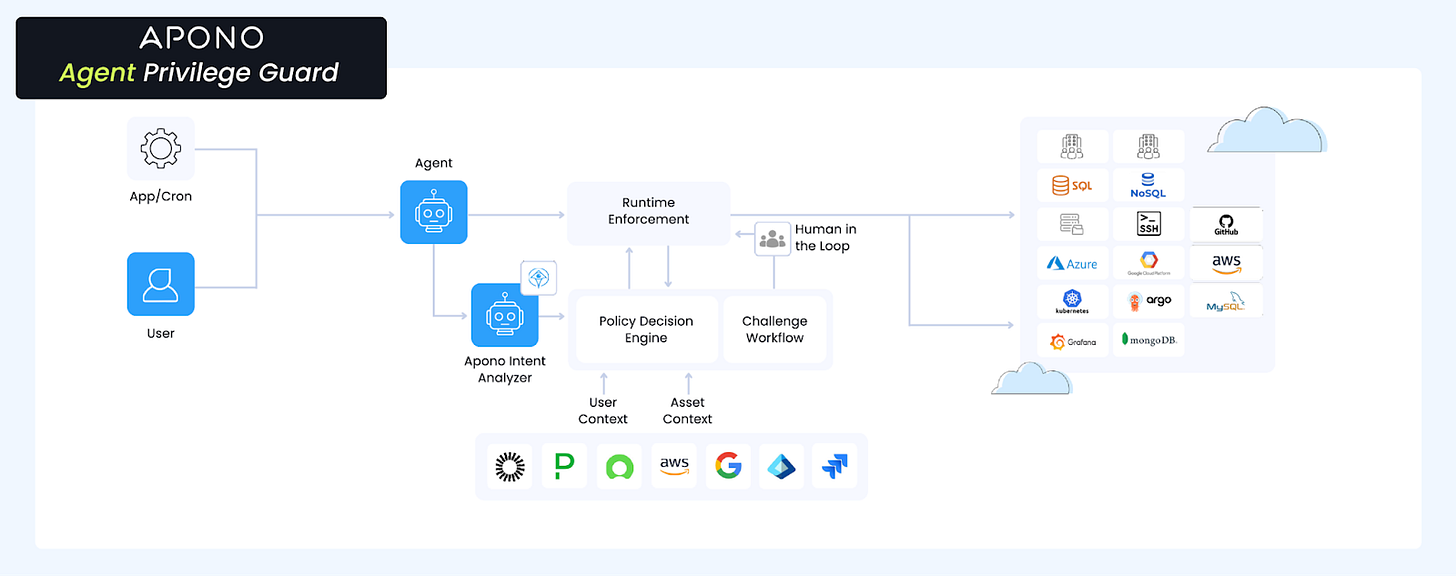

Apono

Overview: Apono’s primary differentiator is the depth of its intent-based access model. Where most platforms treat intent as a signal to inform policy, Apono makes it the central control mechanism. The platform requires agents to declare intent before acting, evaluates that declaration against real-time context including resource attributes, data sensitivity, and environment state, and provisions credentials specifically for that declared operation. The access cycle is continuous: declare intent, evaluate context, grant temporary authority, enforce during execution, revoke, and log.
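The access cycle above (declare, evaluate, grant, enforce, revoke, log) maps naturally onto a scoped block. The sketch below is a generic illustration, not Apono's product or API. The `evaluate` rule, the in-memory grant set, and every name in it are assumptions.

```python
from contextlib import contextmanager

AUDIT_LOG = []         # entries of (agent, event, operation)
ACTIVE_GRANTS = set()  # entries of (agent, operation)

def evaluate(intent: dict, context: dict) -> bool:
    """Hypothetical rule: block writes to highly sensitive data in production."""
    risky = intent["operation"] == "write" and context["sensitivity"] == "high"
    return not (risky and context["environment"] == "prod")

def enforce(agent: str, operation: str) -> None:
    """Called on every action: only operations with a live grant may run."""
    if (agent, operation) not in ACTIVE_GRANTS:
        raise PermissionError(f"{agent} holds no grant for {operation}")

@contextmanager
def temporary_access(agent: str, intent: dict, context: dict):
    op = intent["operation"]
    AUDIT_LOG.append((agent, "declare", op))
    if not evaluate(intent, context):
        AUDIT_LOG.append((agent, "deny", op))
        raise PermissionError("declared intent rejected for this context")
    ACTIVE_GRANTS.add((agent, op))
    AUDIT_LOG.append((agent, "grant", op))
    try:
        yield
    finally:
        # Revocation runs even if the agent's work raises an exception.
        ACTIVE_GRANTS.discard((agent, op))
        AUDIT_LOG.append((agent, "revoke", op))

context = {"sensitivity": "low", "environment": "prod"}
with temporary_access("etl-agent", {"operation": "read"}, context):
    enforce("etl-agent", "read")  # passes while the grant is live
    held_during_session = ("etl-agent", "read") in ACTIVE_GRANTS
events = [event for _, event, _ in AUDIT_LOG]
```

Tying revocation to the end of the block means access cannot outlive the declared operation, which is the property that distinguishes this cycle from a standing role assignment.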

Deterministic Governance: The architectural foundation reflects a deliberate choice to move beyond static access management. Traditional IAM uses fixed policies that cannot adapt to non-deterministic agents operating at machine speed. Apono’s dynamic policy engine evaluates user context, resource attributes, and environment state in real time, which is the same shift cloud infrastructure required when moving from static server configurations to dynamic provisioning. The on-premises component reinforces this architecture: rather than sitting in the network path, Apono installs a component directly in the customer environment that handles secrets and access grants locally. Customer credentials never touch third-party infrastructure, which matters for regulated industries where data residency is a hard constraint and also avoids the latency and bottleneck risks that proxy-based architectures can introduce at scale.

MCP Security: The Apono MCP gateway wraps all MCP tool interactions, injecting dynamic secrets, scopes, and intent parameters at runtime so agents never possess credentials directly. Policies can be configured at the tool level to allow certain operations while escalating others, giving security teams granular control over what each agent can actually do within a granted session. The platform also provides managed tools for resources like Postgres, Kubernetes, and AWS, offering safe access pathways that sit within the intent and policy framework.

Analysis of Non-Deterministic Behavior: For continuous observability, AI-generated session summaries translate raw audit logs into readable accounts of what an agent did and why, making the audit trail actionable for security teams who cannot realistically parse event-level logs across large numbers of concurrent agent sessions.

Apono recently launched Agent Privilege Lab, which maps agent-specific attack patterns to established frameworks like OWASP and MITRE, and includes an intent-based access control simulator for testing guardrails. A capture-the-flag environment is also in the works, where practitioners can attempt to socially engineer agents and experience firsthand how agents can be manipulated to cause harm. These resources reflect genuine investment in the broader practitioner community beyond the product itself.

Apono acknowledges one open challenge on its roadmap: translating non-deterministic agent intent into deterministic, enforceable scopes. The intent analyzer is itself an agent, which introduces some of the same unpredictability it is trying to govern. This is a hard problem the industry has not solved, and Apono is candid that deeper intent translation is ongoing work.

Non-Deterministic Governance: Intent-based authorization is the core of the platform. Rather than simply blocking actions that fall outside declared intent, Apono evaluates the risk level of the privileges being requested. Low-risk operations proceed uninterrupted, while high-risk privileges that could lead to destructive actions trigger a control and escalation response: a human-in-the-loop challenge that pauses execution until a human approves or rejects the request. This distinction matters because it means agents can complete more work autonomously, with humans brought into the loop only when the stakes warrant it. The platform dynamically provisions the lowest-risk privileges sufficient to complete a given task, ensuring least privilege is enforced not as a static policy but as a continuous, context-aware calculation. This makes control and escalation a natural extension of the intent model rather than a blunt interrupt mechanism.
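A small sketch can make the risk-tiered decision concrete. It picks the lowest-risk privilege that covers a task and escalates to a human only above a threshold. The privilege catalog, the risk scores, and the threshold of 7 are invented for illustration and do not describe Apono's scoring.

```python
# Hypothetical privilege catalog: name -> (risk score, operations it covers).
PRIVILEGES = {
    "db_reader": (1, {"select"}),
    "db_writer": (5, {"select", "insert", "update"}),
    "db_admin": (9, {"select", "insert", "update", "drop"}),
}
ESCALATION_THRESHOLD = 7  # assumed cut-off; a real deployment would tune this

def decide(required_ops: set) -> tuple:
    """Return (privilege, outcome) for the least risky privilege covering the task."""
    candidates = [
        (risk, name)
        for name, (risk, ops) in PRIVILEGES.items()
        if required_ops <= ops  # privilege must cover every required operation
    ]
    if not candidates:
        return ("", "deny")
    risk, name = min(candidates)  # lowest risk score wins
    if risk >= ESCALATION_THRESHOLD:
        return (name, "require_human_approval")
    return (name, "auto_grant")
```

Under these assumptions a read-only task is granted `db_reader` without interruption, while a task that needs `drop` can only be satisfied by `db_admin` and therefore pauses for approval.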

Analyst Recommendation: Apono is a strong fit for organizations that want intent as the primary control layer rather than a secondary signal, and particularly for those already managing human and non-human access through Apono, where extending the same policy engine to agents is architecturally natural and operationally straightforward. The platform’s approach reflects a broader philosophy of making security teams enablers of the business.

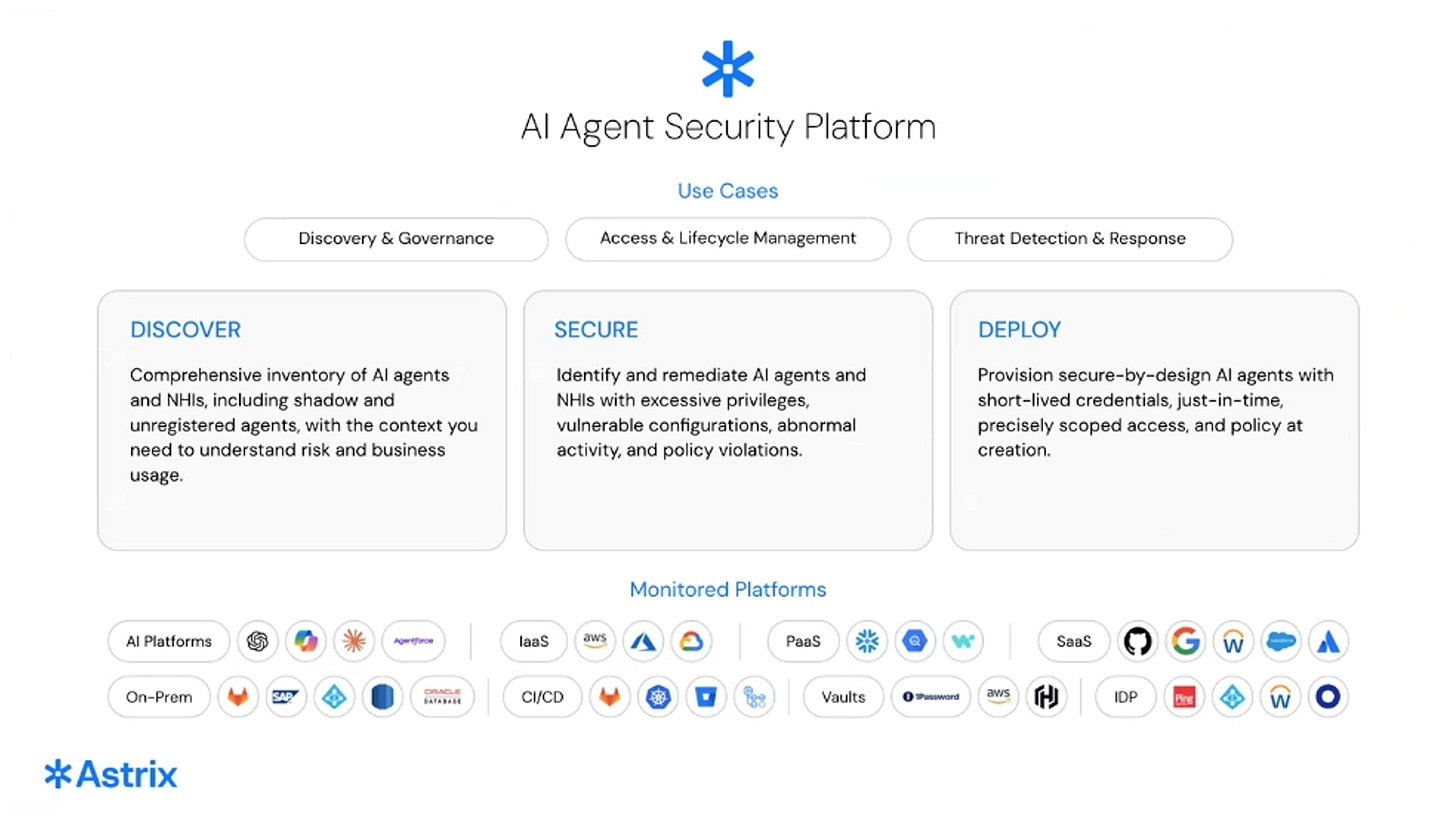

Astrix Security

Overview: Astrix has been building in the non-human identity space for five and a half years, starting with app-to-app security before the NHI category had a name. That history gives their ML threat detection models a competitive advantage that newer entrants cannot easily replicate: training data from millions of real NHI behaviors across years of production deployments, covering a range of environments, access patterns, and attack types that take time to accumulate.

Discovery: Astrix uses fingerprinting to identify agents already running in cloud platforms such as Bedrock agents and custom GPTs, integrates with existing endpoint tools like Defender and CrowdStrike to find local MCP servers without deploying a new agent, and maps third-party integrations for supply chain risk.

Deterministic Governance: Rule-based access policies combined with ephemeral credential provisioning ensure agents operate within defined boundaries without holding persistent credentials. The Agent Control Plane lets developers register agents via Terraform and exchange existing tokens such as Kubernetes OIDC for specifically scoped temporary tokens that expire automatically when the agent is undeployed, improving security without creating a new bottleneck in the development workflow.

Analysis of Non-Deterministic Behavior: Behavioral tracking is where Astrix is strongest. Years of real NHI training data mean the detection models have genuine signal, distinguishing meaningful anomalies from noise in a way that requires time and data volume to build. For continuous observability, an identity graph maps operator-to-agent-to-resource relationships, giving teams the relational context needed to reconstruct what happened during any session and understand how privilege flowed across the agent ecosystem.

MCP Security: Astrix combines both approaches identified in the framework. An MCP gateway provides enforcement and traffic-level control, while integrations built on Cursor and Claude hooks add direct-access observability for local agent environments. Supply chain risk mapping across third-party MCP integrations adds ecosystem-level visibility, and discovery of local MCP servers through existing endpoint tooling means coverage does not depend entirely on routing traffic through a proxy.

Analyst Recommendation: Astrix is a strong fit for security and identity teams that want ML-backed behavioral detection with genuine training depth, and a developer-friendly path to ephemeral access governance that reduces the credential hygiene problems that tend to accumulate quietly in fast-moving engineering organizations.

ConductorOne

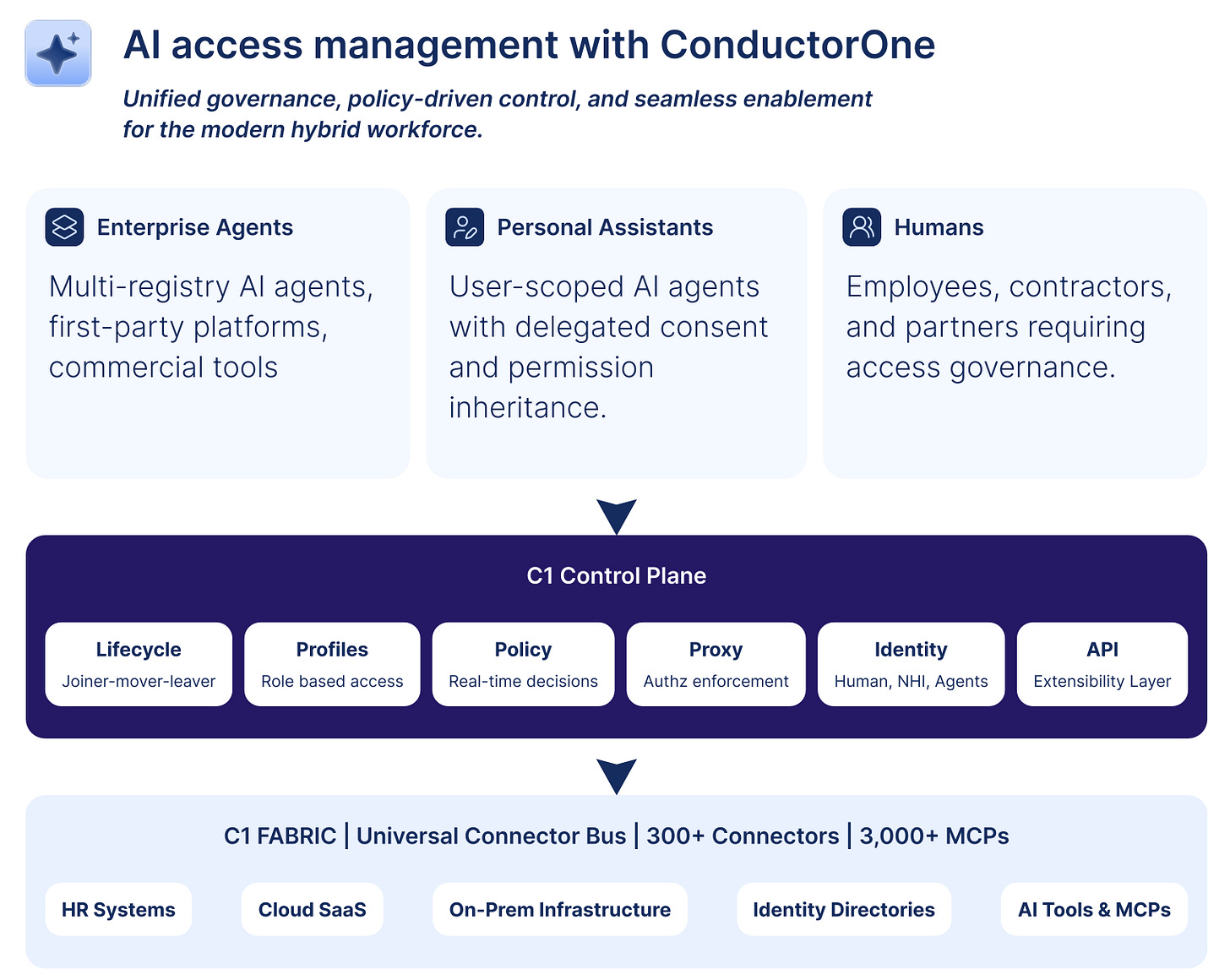

Overview: ConductorOne approaches agent security from the perspective of identity governance and access management. Its core thesis is that the same primitives enterprises already use to govern human access, such as access graphs, entitlement models, certification workflows, self-service requests, and approval policies, can be extended to govern agentic access. Rather than treating agents as a separate security category, ConductorOne positions them as another class of principal within a broader access-control system. The company frames the enterprise AI challenge around three related gaps: visibility, governance, and adoption, arguing that visibility alone is insufficient without governed workflows to translate discovery into controlled access distribution.

Discovery: ConductorOne’s platform is designed not just to show where agents and tools exist, but to translate that visibility into requestable entitlements, approval paths, and governed access distribution. With more than 300 prebuilt connectors and over 3,000 prebuilt MCPs already generated for the platform, the company provides broad catalog-level access to tools out of the box. The same connector-generation techniques developed for broader identity integrations are used to scale MCP coverage, allowing agent-facing tools to slot into an existing governance fabric.

Deterministic Governance: Administrators can register MCP servers, select and approve specific tools, and bundle those tools into profiles that function as governed entitlements. A profile such as a read-only GitHub package can be requested through the same self-service workflow used for human access, routed through approval policies, and granted in a controlled way. This turns MCP tool access into a governable access-management problem. Credentials are managed centrally rather than stored on user endpoints, reducing the need for end users to maintain local secrets.

Non-Deterministic Behavioral Analysis: The platform logs MCP tool usage and exposes audit trails that can be streamed into downstream monitoring systems, making each tool call visible as part of a broader access-governance record.

Non-Deterministic Governance: The same approval machinery used for human access applies to agentic access, with policy-driven approvals, request workflows, and kill-switch controls at the tool, server, or tenant level. ConductorOne argues that agent governance should focus on governing privileged operations directly: what an agent is allowed to do, under what policy, and when a human should be pulled into the loop, with each discrete MCP tool call treated as a governable action.

MCP Security: ConductorOne’s MCP proxy model is its most distinctive technical element. Approved tools are bundled into profiles functioning as governed entitlements, requested and granted through existing self-service workflows. This reduces the need for employees to source, configure, and connect MCPs independently, shifting connection risk and configuration burden away from individual users and into a centrally governed model.

Analyst Recommendation: ConductorOne’s strongest fit is for organizations that see agent security primarily as a governed access problem. Its heritage in identity governance gives it a natural advantage in turning agentic access into something requestable, policy-driven, and reviewable using systems enterprises already understand. The MCP proxy model and prebuilt catalog of over 3,000 MCPs offer one of the most operationally ready paths to governed tool access discussed in these briefings. For buyers approaching the agentic transition through the lens of entitlement management, self-service enablement, and access governance, ConductorOne offers a notably coherent path.

Cyata (Acquired by Check Point)

Overview: Cyata approaches agent security as a control-plane problem for agentic identity, built around the view that agents operate across multiple enterprise surfaces simultaneously and require a unified layer for discovery, governance, and runtime control. Its platform discovers and secures agents across endpoints, browsers, SaaS environments, and cloud infrastructure, reflecting a broader surface area than vendors focused on a single runtime or protocol layer. Cyata’s acquisition by Check Point positions the technology within a broader AI defense platform rather than as a standalone point product.

Discovery: A major strength is the breadth and depth of Cyata’s discovery layer, spanning endpoint-based developer tools, browser-based AI usage, SaaS agent platforms, and cloud-native agent deployments, with attribution back to the human owner of each agent. The platform also reconstructs historical agent activity from artifacts, journals, and existing telemetry, including for agents that were already operating before security controls were deployed, and it does so without requiring a proxy in the data path. This retrospective visibility is especially valuable in environments where agents may already be in use but poorly documented.

Deterministic Governance: Cyata provides tool-level governance and policy enforcement across endpoints and browsers through a single control plane that can define and enforce rules across all agent surfaces. MCP risk handling and static policy controls govern which agent capabilities are permitted across the full operating environment.

Non-Deterministic Behavioral Analysis: Cyata’s most differentiated capability is its focus on compound or “toxic” combinations of risk. Rather than treating each finding in isolation, the platform identifies dangerous combinations of posture conditions, agent configurations, credentials, and runtime behaviors that together create materially higher risk, such as agents operating with untrusted MCP servers, static credentials, autonomous configurations without oversight, and privilege inheritance patterns creating escalation risk.
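The idea of scoring combinations rather than individual findings can be shown with set containment. The finding labels and combination names below are hypothetical and are not Cyata's detection rules. The point is only that a combination fires when all of its conditions are present on the same agent.

```python
# Hypothetical combinations: each maps a name to the findings that must co-occur.
TOXIC_COMBINATIONS = {
    "unsupervised-untrusted-tooling": {"untrusted_mcp_server", "autonomous_no_oversight"},
    "stealable-escalation-path": {"static_credentials", "inherited_privileges"},
}

def toxic_matches(findings: set) -> list:
    """Return the names of every combination fully contained in the agent's findings."""
    return sorted(
        name for name, combo in TOXIC_COMBINATIONS.items() if combo <= findings
    )

# One finding alone matches nothing; the same finding plus its partner does.
isolated = toxic_matches({"static_credentials"})
combined = toxic_matches({"static_credentials", "inherited_privileges", "verbose_logging"})
```

Here `isolated` is empty while `combined` contains `stealable-escalation-path`, which is the behavior that lets a team deprioritize individually minor findings without missing the dangerous pairings.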

Non-Deterministic Governance: Cyata’s guardian-agent approach is particularly notable: within its MCP proxy flow, the platform requires the agent to provide justification for tool usage and evaluates that justification before allowing the action to proceed. This intent-aware pattern governs not just access but the rationale behind access. Human-in-the-loop approval is also available as an alternative enforcement mode.

MCP Security: Cyata’s MCP proxy model evaluates agent tool requests before execution through either the guardian agent or human-in-the-loop approval. This adds an intent-check layer to tool invocation, making it one of the more explicit examples of AI-mediated intent control in the current market, a meaningful distinction from simpler allow-or-block proxy models.

Analyst Recommendation: Cyata’s strongest fit is for organizations that want broad-surface discovery, rich observability, and context-aware runtime enforcement across agents already dispersed across endpoints, browsers, SaaS platforms, and cloud systems. The toxic-combination detection model offers one of the most distinctive analytical approaches in these briefings, and the guardian-agent intent-aware proxy gives Cyata a stronger claim to true runtime decisioning than static policy models. Its single control plane across surfaces and Check Point integration make it especially compelling for enterprises seeking agent security within a broader security platform rather than as an isolated niche tool.

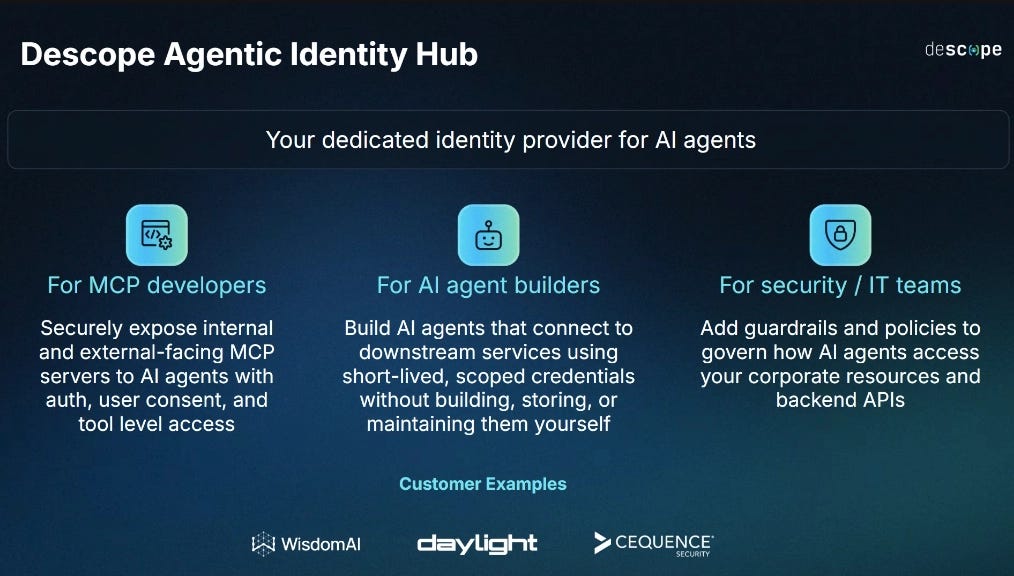

Descope

Overview: Descope is a no-code identity provider whose core business is issuing and managing identities, extended to AI agents through the Agentic Identity Hub. Unlike most vendors in this report, Descope approaches agent security from the developer and builder side: its primary users are teams building MCP servers and AI agents, not security teams deploying runtime controls after the fact.

Deterministic Governance: The value proposition is organized around three distinct users. For teams building MCP servers, Descope acts as the dedicated authorization server, handling user authentication, consent management, client registration, and scope-based access control at the tool level. For teams building AI agents internally using frameworks like LangChain or CrewAI, Descope manages the full credential lifecycle for over 70 out-of-the-box tools including HubSpot, Notion, and GitHub, issuing short-lived scoped credentials so development teams do not have to build their own auth infrastructure. For security and IT teams, Descope’s position as the identity layer enables runtime policy enforcement: a legal team member using Claude to access Salesforce can be restricted to read-only operations on contracts without being able to modify deals, enforced at the identity layer rather than through a separate security tool.

Non-Deterministic Behavioral Analysis: Each agent gets a unique agent ID combining client ID and associated user ID, creating the identity chain of custody that Descope sees as the central problem to solve: when something goes wrong, you need to be able to trace the action back to the agent and the delegating user.

MCP Security: When an agent connects to an MCP server, Descope applies no-code registration controls: accepting only verified clients like Claude or Cloudflare agents, checking originating IP addresses against abuse databases, filtering by geography, and assigning scopes based on verification status. Consent screens surface scope requests to end users before access is granted, with consent stored and time-bounded to the session.
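As a rough sketch of how such registration checks compose, the function below applies them in order and returns the scopes to grant. It does not reflect Descope's configuration model. The client identifiers, verification tiers, scope names, and country list are assumptions, and the blocked address comes from a range reserved for documentation.

```python
from dataclasses import dataclass

@dataclass(frozen=True)
class ClientRequest:
    client_name: str
    ip: str
    country: str

# All values below are illustrative assumptions.
VERIFIED_CLIENTS = {"claude": "full", "cloudflare-agent": "basic"}
ABUSE_IPS = {"203.0.113.7"}
ALLOWED_COUNTRIES = {"US", "DE"}
SCOPES_BY_STATUS = {
    "full": {"tools:read", "tools:write"},
    "basic": {"tools:read"},
}

def register(req: ClientRequest) -> set:
    """Return the scopes to grant; an empty set means registration is refused."""
    status = VERIFIED_CLIENTS.get(req.client_name)
    if status is None:
        return set()  # only verified clients are accepted
    if req.ip in ABUSE_IPS:
        return set()  # originating IP is on the abuse list
    if req.country not in ALLOWED_COUNTRIES:
        return set()  # geography filter
    return set(SCOPES_BY_STATUS[status])
```

A consent step would sit after this function: the returned scopes are what the end user is asked to approve, not what the client receives automatically.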

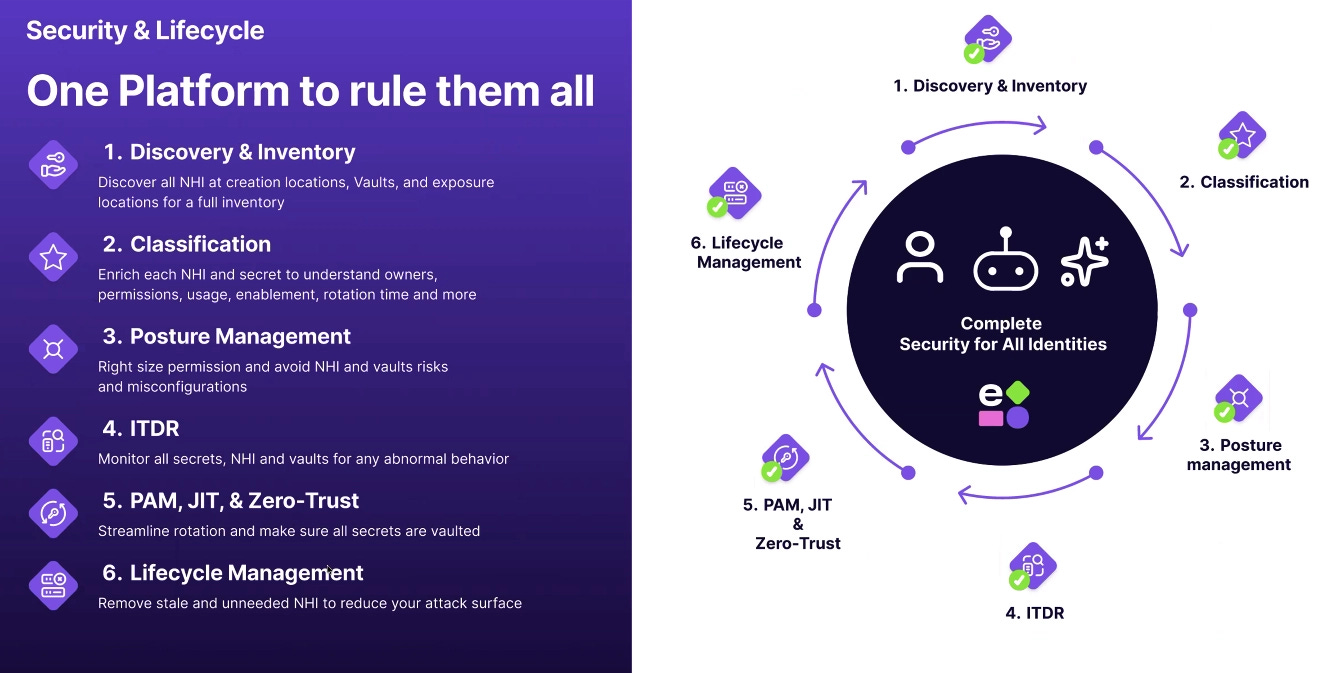

Entro Security

Overview: Entro’s core thesis is protocol-agnostic: agents will always need credentials to access enterprise resources, making the identity layer a universal control surface that works regardless of whether agents use MCP, direct APIs, local tools, or browser automation. This is a direct architectural counter to gateway-based approaches, which only see traffic that flows through them. If an agent needs enterprise access, it needs a non-human identity, and that credential becomes both the discovery signal and the enforcement point.

Discovery: Rather than deploying a new sensor or routing traffic through a proxy, Entro maps agents across multiple surfaces simultaneously: endpoint detection identifies agents running on managed devices, cloud IAM enumeration finds agents operating through cloud identities, SaaS interrogation discovers agents configured inside tools like GitHub, Datadog, and Slack, and network fingerprinting identifies agents by their connection patterns. Every discovered agent is tied back to the human operator who provisioned it, which is the attribution chain that makes every subsequent layer of runtime security possible.

Deterministic Governance: Entro’s most distinctive capability at this layer is the permissionless agent model. Rather than granting credentials that an agent holds persistently, permissions are provisioned on the fly based on policy and automatically revoked after use. Custom policies control which resources an agent can access, and expiration dates can be set on credentials so that time-bounded access is enforced by design. There are no standing credentials to steal or misuse.

Analysis of Non-Deterministic Behavior: For behavioral tracking, the platform detects anomalies against established NHI access patterns, flagging deviations such as access from untrusted locations or mass data encryption attempts. For continuous observability, every credential usage event is logged and tied to the agent, the operator, and the resource accessed. Entro recently launched intent analysis and logging for Claude Code, providing visibility into why an agent is taking an action and creating an auditable record of agent reasoning.

MCP Security: Entro takes a direct-access rather than proxy-based approach. It uses native integrations to monitor and control agent activity, which means strong observability and contextual intelligence with governance enforced through the identity layer rather than through traffic interception.

Analyst Recommendation: Entro is a strong fit for organizations building runtime security on top of an existing NHI program, or for those starting with discovery and deterministic access governance before layering in deeper runtime controls. It is particularly well suited for heterogeneous agent environments where a gateway would only provide partial visibility, and where permissionless just-in-time access is a security priority.

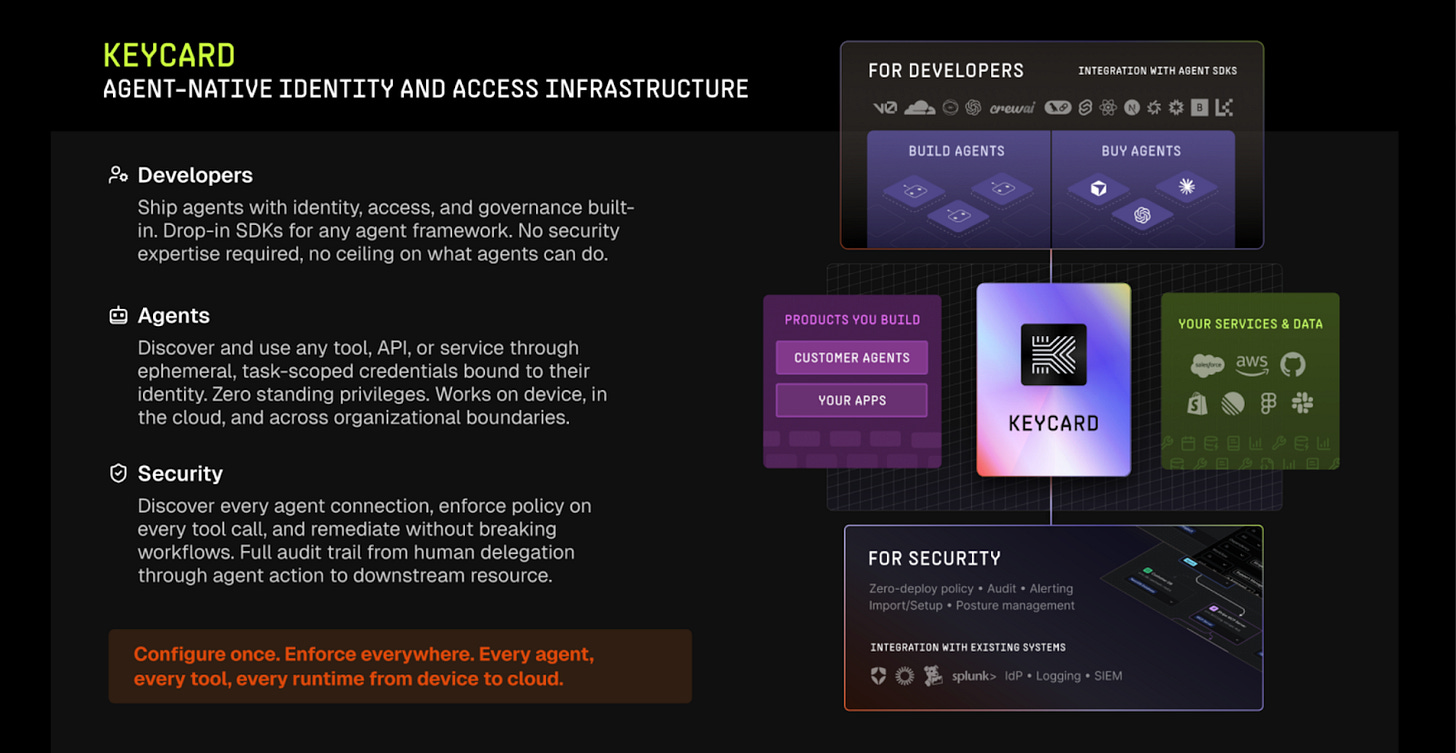

Keycard

Overview: Keycard is a developer-centric vendor building runtime security infrastructure for agents, with a primary focus on making credential issuance itself the enforcement point. Rather than layering controls on top of tokens that were minted at service-account creation time, Keycard issues credentials at the moment of tool calls, when the agent has full context about what it is doing and why.

Discovery: Keycard’s discovery capability is framed as a dependency of its runtime controls, not a standalone product. The platform builds an estate model of the customer environment, a unified data model of agents, their tool inventories, and the resources they can reach. This model is enriched in real time from external sources including SIEM platforms, DSPM providers (such as BigID and Cyera), and supply chain security tooling. The result is a continuously updated map of what agents exist, what tools they use, and what the current risk posture of those tools is, including whether a CLI, MCP server, or package has known vulnerabilities. The design philosophy is that discovery and enforcement without a sufficient data model produce noise rather than signal; policies must be enforced from a unified data model of the estate. For Keycard, discovery is not a box to check at deployment. It is the substrate on which runtime decisions are made.

Deterministic Governance: Keycard aims to support both human-in-the-loop and autonomous operation models, and the platform is designed to navigate the tension between them. The team explicitly identifies consent fatigue as a design constraint: excessive approval requests dilute the signal for decisions that genuinely require human judgment. The system is built to learn over time where approvals have historically been granted, and to surface only the approval requests that carry real decision value, eventually proposing autonomous policy updates for patterns that have been consistently approved.
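One simple way to picture the learning loop is a tally of past approval outcomes per request pattern. The sketch below is an assumption about how such a heuristic could work, not Keycard's algorithm. The pattern strings and the minimum of five requests are invented.

```python
from collections import Counter

def propose_auto_approvals(history, min_requests: int = 5) -> list:
    """Suggest patterns that were requested often and approved every time.

    history: iterable of (pattern, approved) pairs from past human decisions.
    """
    total, approved = Counter(), Counter()
    for pattern, ok in history:
        total[pattern] += 1
        approved[pattern] += int(ok)
    return sorted(
        p for p in total
        if total[p] >= min_requests and approved[p] == total[p]
    )

history = (
    [("read:crm.tickets", True)] * 6        # always approved, seen often
    + [("write:billing.refunds", True)] * 4  # always approved, but too few samples
    + [("write:billing.refunds", False)]     # and rejected once
    + [("read:hr.salaries", True)] * 2       # too few samples
)
proposals = propose_auto_approvals(history)
```

The output is a proposal for a policy change, which a human still reviews. Only `read:crm.tickets` qualifies here, so that is the one prompt that would stop reaching approvers if the proposal were accepted.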

Dynamic termination and revocation capabilities are in active development. The target state allows the platform to pause a session mid-execution, revoke access to a specific sub-session or reasoning branch, or terminate entirely based on real-time risk signals. Staged policy rollouts, impact analysis against historical resolution patterns, and rollback mechanisms are also on the near-term roadmap.

In terms of agent observability, Keycard captures two complementary audit streams at the point of credential issuance: the agent’s own explanation of its intent (what it believes it is doing and why), and the full lifecycle trajectory of tool calls leading to that moment. These streams are combined into a session-level record that attributes every access decision to a specific agent, session, and reasoning chain. The architecture provides the audit trail that compliance and incident investigation teams require, and it forms the input layer for Keycard’s authorization logic.

Session hierarchy is explicit in the platform. Keycard maintains a tree structure of agent sessions and sub-sessions, which is particularly relevant for long-running multi-agent chains where specialized agents hand off to one another. This enables selective action: security teams can revoke a specific reasoning branch or sub-session without terminating the parent workflow.
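Branch-level revocation over a session tree can be sketched with a small recursive structure. The `Session` class and the session names are hypothetical. This illustrates the data structure described above, not Keycard's implementation.

```python
from dataclasses import dataclass, field

@dataclass
class Session:
    name: str
    children: list = field(default_factory=list)
    revoked: bool = False

    def spawn(self, name: str) -> "Session":
        """Create a sub-session, e.g. when one agent hands off to another."""
        child = Session(name)
        self.children.append(child)
        return child

    def revoke(self) -> None:
        """Revoke this session and everything beneath it; ancestors are untouched."""
        self.revoked = True
        for child in self.children:
            child.revoke()

root = Session("orchestrator")
research = root.spawn("research")
scraper = research.spawn("scraper")
billing = root.spawn("billing")

research.revoke()  # cuts off the research branch only
```

After the call, `research` and `scraper` are revoked while `root` and `billing` keep running, so a suspicious sub-agent can be stopped without discarding the rest of a long-running workflow.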

Non-Deterministic Behavioral Analysis: Keycard sees behavioral analysis as a key part of building its non-deterministic governance layer. The current architecture captures the inputs needed for behavioral baseline construction: session trajectories, tool call sequences, and credential request patterns. Autonomous drift detection and risk scoring are near-term development priorities rather than current capabilities.

Non-Deterministic Governance: This is Keycard’s primary differentiation. At tool call time, the platform evaluates authorization using dual context: the agent’s stated reasoning and the cumulative trajectory of its session. An agent that has retrieved a single customer record presents a materially different risk profile than one whose session history shows progressive lateral movement, even if both actions are within its nominal permissions. Keycard’s policy engine evaluates this combined signal to dynamically grant or withhold credentials for that specific tool call, rather than relying on static scopes set at deployment.
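To show why the same request can warrant different answers, the sketch below uses a deliberately crude stand-in for trajectory analysis: it counts how many distinct systems a session has touched. The tool naming scheme (`system.operation`), the limit of three systems, and the `authorize` function are assumptions. A production engine would weigh far richer signals.

```python
def authorize(stated_reason: str, trajectory: list, requested: str,
              max_distinct_systems: int = 3) -> bool:
    """Grant a credential only if a reason is given and the session has not sprawled.

    trajectory: earlier tool calls in this session, named "system.operation".
    """
    if not stated_reason.strip():
        return False  # no stated reasoning, no credential
    # The system is the part of the tool name before the first dot.
    systems = {call.split(".")[0] for call in trajectory + [requested]}
    return len(systems) <= max_distinct_systems

request = "crm.get_customer"
reason = "look up the customer for an open support ticket"

fresh_session = authorize(reason, [], request)
sprawling_session = authorize(
    reason,
    ["hr.list_employees", "finance.export_ledger", "vault.read_secret"],
    request,
)
```

The request and the stated reason are identical in both calls, yet only the fresh session receives a credential, because the second session's history already spans three other systems.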

Policy authorship is layered across stakeholders. Agent builders can introduce guardrails scoped to their specific agents. End users can impose constraints such as budget limits. Security teams define organizational risk posture. All layers are resolved before credential issuance, with evaluation flowing from resource level up through user level. Notably, Keycard is designing its policy language to be readable and writable by agents themselves, reflecting an expectation that at agentic scale, autonomous policy management becomes necessary.
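Layered resolution can be illustrated as a list of independent checks that must all pass before a credential is issued. The layer names follow the stakeholders named above, but the rules, the request fields, and the 50-dollar budget are invented. The evaluation order shown is arbitrary, since every layer must agree.

```python
# Each layer is (owner, check). A check returns True when the request is acceptable.
LAYERS = [
    ("security_team", lambda r: r["tool"] != "prod.delete_database"),
    ("agent_builder", lambda r: r["tool"].startswith(("crm.", "email."))),
    ("end_user", lambda r: r["spent_usd"] + r["cost_usd"] <= 50.0),
]

def resolve(request: dict) -> tuple:
    """Return (allowed, blocking_layer); blocking_layer is "" when allowed."""
    for owner, check in LAYERS:
        if not check(request):
            return (False, owner)
    return (True, "")

ok = resolve({"tool": "crm.get_customer", "cost_usd": 1.0, "spent_usd": 10.0})
over_budget = resolve({"tool": "email.send", "cost_usd": 5.0, "spent_usd": 48.0})
off_limits = resolve({"tool": "prod.delete_database", "cost_usd": 0.0, "spent_usd": 0.0})
```

Returning the name of the blocking layer matters operationally: it tells the agent, or the human reviewing the denial, whose policy to consult.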

MCP Security: Keycard addresses MCP security through two mechanisms. First, its supply chain integration can block tool calls to MCP servers with known vulnerabilities, using data ingested from security tooling at the estate modeling layer. Second, because credential issuance happens at the tool call boundary, the enforcement point is MCP-aware by design: the same intent evaluation and lifecycle audit that governs any tool call applies directly to MCP server interactions. This is a structural approach rather than an MCP-specific feature layer, meaning coverage extends naturally as the MCP ecosystem grows without requiring vendor-specific integrations for each server.

Analyst Recommendation: Keycard is best suited for organizations that want to instrument the credential layer for agents from the ground up, rather than retrofitting controls onto existing token architectures. The platform is developer-first in its UX and instrumentation (accessible via CLI or UI), which makes it a practical fit for teams where developers and security engineers work in close collaboration. The current product is most relevant to security and identity engineering teams that are designing agent infrastructure at early stages and want the enforcement point to be correct before scale.

Microsoft (Entra Agent ID / Agent 365)

Overview: Microsoft approaches agent security from a position no other vendor can easily replicate: it is building for an internal environment where agent deployment is already occurring at enormous scale, with visibility into 500,000 agents. Entra Agent ID gives agents a first-class identity inside the Microsoft Entra directory, allowing them to participate in the same identity, access, governance, and protection workflows enterprises already use for human and application identities. Agents are represented as unique identity objects, OAuth-compatible, supporting both autonomous flows and on-behalf-of-user flows.

Discovery: Because agents live in the same Entra directory as users and applications, Microsoft provides directory-native discovery across agent populations. Blueprints act as reusable templates for creating and governing categories of agents consistently, allowing organizations to define baseline permissions, access boundaries, and management rules for a class of agents rather than configuring each one independently. Every agent is expected to have a sponsor, with governance workflows tracking sponsorship, managing orphaned agents, and automating reassignment or containment as ownership changes.
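The blueprint and sponsorship concepts can be modeled in a few lines. This sketch is a plain data model, not the Entra or Microsoft Graph API: `Blueprint`, `Agent`, `find_orphans`, and `reassign_orphans` are hypothetical names chosen for illustration.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class Blueprint:
    """Reusable template: one set of baseline permissions for a class of agents."""
    name: str
    baseline_permissions: frozenset


@dataclass
class Agent:
    agent_id: str
    blueprint: Blueprint
    sponsor: Optional[str] = None  # accountable human owner, if any

    @property
    def permissions(self) -> frozenset:
        # Permissions come from the blueprint, not per-agent configuration.
        return self.blueprint.baseline_permissions


def find_orphans(agents: list[Agent], active_users: set[str]) -> list[Agent]:
    """Agents with no sponsor, or whose sponsor is no longer an active user."""
    return [a for a in agents if a.sponsor is None or a.sponsor not in active_users]


def reassign_orphans(agents: list[Agent], active_users: set[str], fallback: str) -> list[str]:
    """Reassign orphaned agents to a fallback sponsor and return their IDs."""
    orphans = find_orphans(agents, active_users)
    for agent in orphans:
        agent.sponsor = fallback
    return [a.agent_id for a in orphans]
```

A real workflow might contain the agent instead of reassigning it; the sketch shows only the reassignment path.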

Deterministic Governance: Agents can be included in conditional access policies, governance workflows, lifecycle automation, and audit systems natively within the directory plane. Blueprints apply the same baseline permissions, access boundaries, and management rules to every agent in a class, so controls do not have to be configured agent by agent. Access-package approval flows, sponsorship lifecycle management, and just-in-time consent provide layered deterministic controls.

Non-Deterministic Behavioral Analysis: Entra audit trails, directory-native metadata, and the ability to distinguish agent activity from other identity activity support continuous observability. In addition, Microsoft’s roadmap includes ML-driven agent risk detection to deepen behavioral analysis capabilities over time.

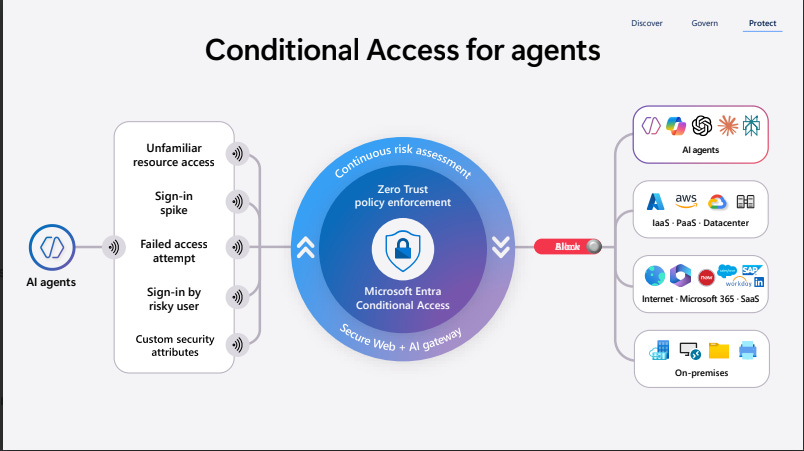

Non-Deterministic Governance: Conditional access for agents, real-time policy controls, and risk-based enforcement enable dynamic governance decisions. The platform uses a shared policy engine informed by risk signals across identities, devices, networks, and activity, with the ability to contain or quarantine risky agents based on live conditions.

MCP Security: Current capabilities center on server-level discovery and network blocking through Agent 365’s MCP discovery features. Microsoft also applies secure web and AI gateway controls for URL filtering, threat-intelligence filtering, file-transfer restrictions, and prompt-injection protection on agent traffic. The roadmap aims to bring tool-level MCP controls into the same policy engine.

Analyst Recommendation: Microsoft’s strongest differentiation is building agent security as a native extension of a very large existing identity and security platform. The blueprint model offers one of the clearest governance-at-scale approaches discussed in these briefings, and the sponsorship lifecycle model provides a direct answer to enterprise accountability requirements. For organizations already invested in Microsoft 365, Azure, Entra, Defender, and Purview, agent identity, governance, protection, and network controls come together in one operational model, making Microsoft especially compelling where scale and platform consolidation are priorities.

Noma Security

Overview: Noma Security approaches agent security from the premise that effective enforcement cannot rely solely on protocol-layer choke points such as MCP gateways. Its core argument is that a meaningful portion of agent activity occurs outside of MCP altogether, whether through direct API calls, cloud-native service integrations, or increasingly through skills-based execution patterns that do not traverse an MCP control plane. From that perspective, Noma positions runtime instrumentation and hooks-based enforcement as the more durable control layer, particularly for organizations operating across a mix of homegrown agents, SaaS agent platforms, and workforce-facing agent tools.

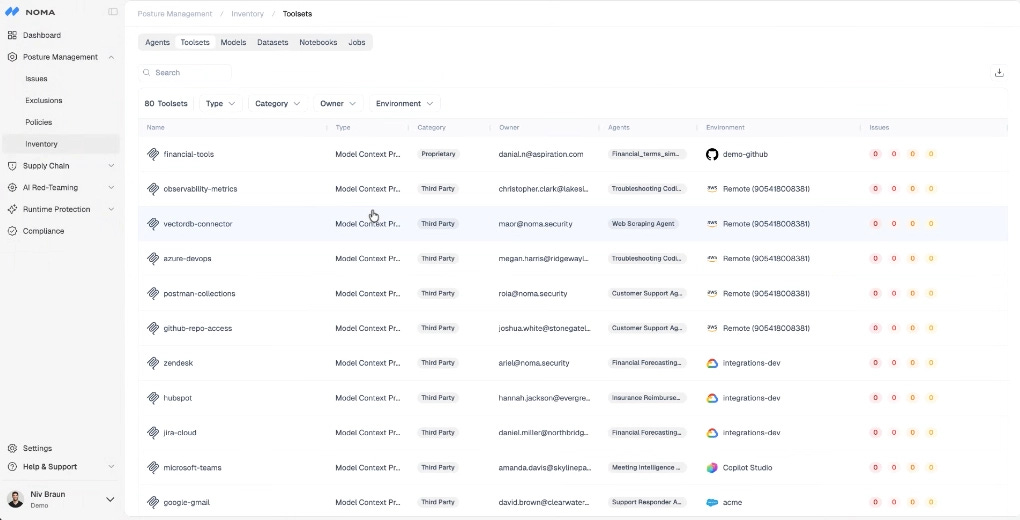

A useful aspect of Noma’s framing is its segmentation of the market into three agent categories: homegrown agents built by internal engineering teams, SaaS agent platforms where business users can create and share agents, and local or workforce-facing agents used directly by employees. This taxonomy is practical because the identity and control problems differ across each category even when the underlying security principles remain consistent. In SaaS agent platforms, for example, Noma places particular emphasis on what it describes as the maker’s identity problem: an agent created with static access tied to its creator can continue to operate with that creator’s privileges even when shared more broadly. That makes creator identity, delegated access, and agent ownership central to the governance model.

Discovery: Noma’s discovery and visibility layer is designed to capture not only agents themselves, but also the surrounding context that determines their risk. The platform inventories agents, models, tools, MCP servers, data sources, triggers, users, and agent-to-agent relationships, with an emphasis on showing how these elements connect rather than presenting them as isolated artifacts. It also appears to draw on a broad integration footprint across cloud services, code repositories, notebook environments, SaaS agent platforms, and endpoint telemetry. That contextual approach is important to Noma’s broader positioning: the company is not simply trying to enumerate agents, but to understand how they are constructed, what they can reach, who can invoke them, and how they interact with other systems.

Deterministic Governance: At the deterministic governance layer, Noma’s focus on controlling tools, MCP usage, and skills-based execution paths makes it well positioned for environments where static allow-or-deny rules are too blunt to preserve usability. Noma’s layered behavioral signals are precisely the kind of data that non-deterministic governance depends on, meaning the platform is well positioned to feed dynamic policy decisions as organizations mature into the third layer of the framework.

Noma’s strongest differentiation appears in runtime enforcement. Its model operates across three analytical layers: content, context, and behavior. At the content level, the platform evaluates whether a given action is inherently risky or sensitive. At the context level, it evaluates whether the action is consistent with the agent’s stated purpose and current session context. At the behavior level, it compares the session against historical patterns to detect drift, anomaly, or combinations of actions that create risk over time. This layered structure is particularly well suited to agent security because many meaningful failures do not arise from a single obviously malicious action, but from an accumulation of individually permissible steps that together produce an unsafe outcome.
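The three analytical layers can be sketched as three independent checks on the same action. This is an illustration only, not Noma's implementation: the action names, the `SENSITIVE_ACTIONS` set, the per-session baseline counts, and the tolerance value are all assumptions.

```python
# Hypothetical set of actions treated as inherently sensitive.
SENSITIVE_ACTIONS = {"export_records", "delete_records"}


def content_risk(action: str) -> bool:
    """Content layer: is the action inherently risky or sensitive?"""
    return action in SENSITIVE_ACTIONS


def context_risk(action: str, purpose_actions: set[str]) -> bool:
    """Context layer: is the action outside the agent's stated purpose?"""
    return action not in purpose_actions


def behavior_risk(session: list[str], action: str,
                  baseline_counts: dict[str, int], tolerance: int = 2) -> bool:
    """Behavior layer: does this session exceed the historical per-session count?"""
    observed = session.count(action) + 1  # include the action being evaluated
    return observed > baseline_counts.get(action, 0) + tolerance


def evaluate(action: str, purpose_actions: set[str], session: list[str],
             baseline_counts: dict[str, int]) -> dict[str, bool]:
    """Run all three layers and report which ones flag the action."""
    return {
        "content": content_risk(action),
        "context": context_risk(action, purpose_actions),
        "behavior": behavior_risk(session, action, baseline_counts),
    }
```

With a baseline of five reads per session, an eighth `read_record` is flagged only by the behavior layer, which is the "individually permissible steps" case the paragraph describes.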

Noma also appears to benefit from strong market momentum and ecosystem validation. Its partnerships with major cloud and platform providers, along with its visibility in large-enterprise deployments, reinforce the view that the company is being treated as a serious platform in the emerging agent-security market rather than as a narrow point solution. Just as importantly, the company’s broader platform architecture appears designed to let posture, runtime, and context enrich one another over time. That bidirectional contextualization, using posture data to inform runtime decisions and runtime data to improve posture recommendations, gives the platform a coherent structure for scaling as organizations move from early agent experimentation to much larger agent populations and more complex agent-to-agent systems.

Non-Deterministic Behavioral Analysis: For behavioral tracking, the platform compares each session against historical baselines to detect drift, anomaly, or combinations of actions that individually look permissible but together produce an unsafe outcome. For continuous observability, the platform captures session-level context including instructions, tool usage, and behavioral history, giving teams a detailed picture of what an agent is doing and why at any point in time.

MCP Security: Another notable aspect of Noma’s positioning is its critique of MCP gateway-centric architectures. The company’s argument is not simply that gateways are incomplete, but that they operate too far from the agent’s actual reasoning context to support high-quality runtime decisions. By contrast, hooks-based enforcement allows the platform to observe more of the session itself, including instructions, tool usage, contextual signals, and behavioral history. That deeper visibility enables more dynamic controls and, in Noma’s framing, allows organizations to be more permissive where appropriate because enforcement can be based on richer context rather than static policy alone.

Analyst Recommendation: Noma’s strength lies in treating agent security as a runtime control problem that must account for purpose, behavior, and execution context rather than just protocol mediation or static identity assignment. For organizations with meaningful exposure to developer agents, workforce-facing agent tools, or heterogeneous agent environments where not all activity will route through MCP, Noma offers a runtime architecture that appears well matched to how agent behavior is actually evolving.

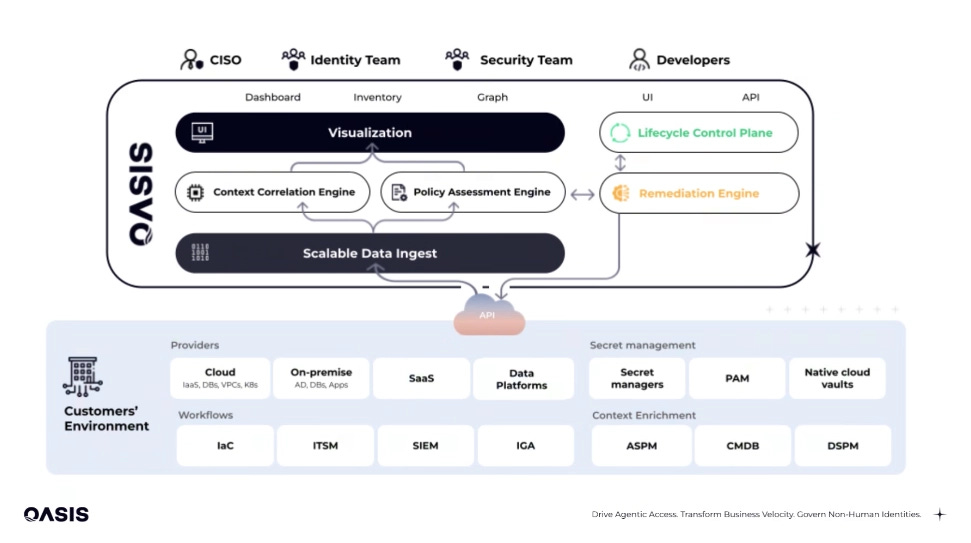

Oasis Security

Overview: Oasis is built on a specific architectural thesis: MCP is becoming the standard protocol through which agents access enterprise resources, which makes the MCP gateway the optimal enforcement point. Rather than monitoring identity signals at the perimeter, Oasis sits directly in the communication path between agents and the resources they consume. Instead of following the credentials, Oasis intercepts the conversation.

Deterministic Governance: The platform analyzes incoming access requests, applies least-privilege policies, and generates temporary identities scoped to each session without relying on standing credentials. Those identities are decommissioned automatically when the session ends. Policies are enforced using OPA, producing auditable decisions rather than probabilistic ones. A hybrid deployment model keeps the customer-side component within the organization’s own perimeter, addressing data residency requirements without sacrificing centralized policy control.
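The session-scoped identity lifecycle can be sketched with a context manager. This is not Oasis's API and does not model OPA policy evaluation. `IdentityBroker` and `session_identity` are hypothetical names, and an in-memory dictionary stands in for the real credential store.

```python
import secrets
from contextlib import contextmanager


class IdentityBroker:
    """Issues temporary identities scoped to one session (illustrative only)."""

    def __init__(self) -> None:
        self._active: dict[str, tuple[str, frozenset]] = {}

    def issue(self, session_id: str, scopes: set[str]) -> str:
        token = secrets.token_urlsafe(16)  # random, non-standing credential
        self._active[token] = (session_id, frozenset(scopes))
        return token

    def is_allowed(self, token: str, scope: str) -> bool:
        # Deterministic decision: the token must be live and carry the scope.
        entry = self._active.get(token)
        return entry is not None and scope in entry[1]

    def revoke(self, token: str) -> None:
        self._active.pop(token, None)


@contextmanager
def session_identity(broker: IdentityBroker, session_id: str, scopes: set[str]):
    """Issue an identity for the session and decommission it when the session ends."""
    token = broker.issue(session_id, scopes)
    try:
        yield token
    finally:
        broker.revoke(token)  # runs even if the session raises an error
```

Because revocation sits in the `finally` clause, the identity is removed even when the session fails, which is the property that avoids standing credentials.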

Non-Deterministic Behavioral Analysis: For behavioral tracking, the platform follows sequences of actions across tools and builds a contextual picture of agent activity across a session. For continuous observability, full session-level logging of every brokered connection is built into the gateway layer, capturing what the agent accessed, when, and under what context.

Non-Deterministic Governance: This is where Oasis introduces one of the more novel concepts in the market: the MCP firewall. Unlike traditional firewalls that make binary allow-or-deny decisions based on static rules, the MCP firewall uses behavioral and intent signals accumulated during the session to make dynamic access decisions. If an agent reads confidential data from Salesforce and then requests access to Gmail, the firewall identifies that sequence as a potential exfiltration risk based on data classification and blocks it, even though each individual action would have been permitted in isolation. This cross-tool, sequence-aware enforcement is something static policy models structurally cannot do, and it represents a meaningful step toward genuinely non-deterministic governance at the MCP layer. For intent-based authorization, Oasis performs analysis of agent intent as part of each request evaluation, providing context about why an agent is making a request alongside the access decision. Human-in-the-loop approval flows for controlling and escalating high-risk actions are on the near-term roadmap.
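The Salesforce-then-Gmail example can be sketched as a small piece of session state. This is not Oasis's implementation: the `SessionFirewall` class, the `EXTERNAL_CHANNELS` set, and the caller-supplied `data_label` are assumptions, and real intent analysis is not modeled.

```python
from dataclasses import dataclass, field

# Hypothetical set of MCP servers that can move data outside the organization.
EXTERNAL_CHANNELS = {"gmail"}


@dataclass
class SessionFirewall:
    """Tracks whether the session has touched confidential data (illustrative)."""
    tainted: bool = False
    log: list = field(default_factory=list)

    def check(self, server: str, tool: str, data_label: str = "public") -> bool:
        """Return True if the call is allowed given what happened earlier."""
        # Sequence rule: after a confidential read, block outbound channels.
        if self.tainted and server in EXTERNAL_CHANNELS:
            self.log.append((server, tool, "blocked"))
            return False
        # Record the classification of data this call exposes to the agent.
        if data_label == "confidential":
            self.tainted = True
        self.log.append((server, tool, "allowed"))
        return True
```

A fresh session may call Gmail freely, while the same call is blocked once a confidential read precedes it. A static per-tool rule cannot express that distinction.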

MCP Security: Oasis detects hard-coded secrets in MCP server configurations and identifies supply chain risks within the broader MCP ecosystem, reflecting a complete view of how MCP threats materialize in practice.

Analyst Recommendation: Oasis is a strong fit for organizations standardizing on MCP as their agent-to-resource protocol, particularly where cross-tool data exfiltration is a primary concern and where deterministic, auditable policy enforcement is a compliance requirement.

Okta

Overview: Okta’s approach to agentic security operates from a fundamentally different starting position than every other vendor in this report: it is already the identity provider. With 19,000 customers managing human and service-account workloads, Okta is not building a new security layer but extending the existing identity fabric to treat agents as first-class identities alongside human users. The technical centerpiece is the Identity Assertion Grant (ID-JAG), an open standard Okta co-developed with other identity providers for agentic workflows, ensuring an agent’s permissions are always bound by the specific user’s existing access rights.

Discovery: A dashboard accessible through Okta Identity Security Posture Management (ISPM), now generally available, ensures discoverability across all AI agent platforms. The Secure Access Monitor (SAM) plugin, currently in Early Access, monitors the user’s browser for new OAuth grants, capturing Claude Code and all calls to MCP servers that require an OAuth grant. Additionally, Okta will soon have the ability to intercept and secure traffic from MCP clients like Claude Code and Cursor, providing end-to-end coverage.

Deterministic Governance: Authorization operates at three tiers. User context constrains the agent to the permissions of the human operator. Coarse-grained scopes define what categories of actions the agent can perform. Fine-grained authorization through fga.dev enables policy-level control over individual resources and operations. The certification campaign capability enables proactive review of agent access rights, applying the same governance rigor to agents that organizations already apply to human entitlements.
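The three tiers can be sketched as successive checks. This is not Okta's or fga.dev's API: the `Request` shape, the scope strings, and the relationship tuples are hypothetical, and a simple set lookup stands in for a real fine-grained authorization model.

```python
from typing import NamedTuple


class Request(NamedTuple):
    user: str      # human operator the agent acts on behalf of
    scope: str     # coarse category, e.g. "documents:read"
    relation: str  # fine-grained relation, e.g. "viewer"
    obj: str       # individual resource, e.g. "doc:roadmap"


def authorize(req: Request,
              user_scopes: dict[str, set[str]],
              agent_scopes: set[str],
              relationships: set[tuple[str, str, str]]) -> tuple[bool, str]:
    """Return (allowed, tier) where tier names the check that decided."""
    # Tier 1: the agent can never exceed the human operator's permissions.
    if req.scope not in user_scopes.get(req.user, set()):
        return False, "user_context"
    # Tier 2: coarse-grained scopes granted to the agent itself.
    if req.scope not in agent_scopes:
        return False, "coarse_scope"
    # Tier 3: fine-grained check on the individual resource and operation.
    if (req.user, req.relation, req.obj) not in relationships:
        return False, "fine_grained"
    return True, "allowed"
```

Ordering the checks from broadest to narrowest means the returned tier name shows how far a request got before it was denied.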